GDPR compliant research tools matter because research data is not generic. Transcripts, recordings and open-text responses from participants can contain personal data, health information, commercially sensitive insight and identifiable detail. The regulation that governs how that material is collected, stored and processed hasn’t softened since 2018, and the tools most research teams reach for now were not built with that data in mind.

AI is now standard in research workflows. It transcribes interviews in real time, surfaces themes and turns hours of raw data and insight into structured findings. The speed is real. So is the risk that comes with moving sensitive participant data through platforms that were designed for breadth rather than research-specific governance.

GDPR compliance is frequently assumed rather than verified. A tool may carry a compliant label. That does not, however, mean the workflow behind it is safe.

In this blog, we’ll cover what GDPR compliance actually requires in a research context, where risk shows up in practice and how four commonly used tools compare when that standard is applied properly.

What makes a research tool GDPR compliant?

For a research tool to meet the GDPR standard, several conditions need to be in place.

1. A data processing agreement must exist.

When a tool processes personal data on your behalf, it acts as a data processor. Article 28 of the GDPR requires a formal DPA between the controller and the processor. Without one, the arrangement is not legally sound, regardless of how the tool markets itself. In the simplest terms, if you are paying for or using a tool to handle your participants’ data, you need a signed legal agreement with the provider that spells that out exactly. This is often included in the terms and conditions you accept when signing up, but not always.

2. Data transfers must be governed.

If data is processed outside the UK or EEA, a lawful transfer mechanism must be in place, typically standard contractual clauses (SCCs). This applies whether the tool is US-based, cloud-hosted across multiple regions, or routed through third-party infrastructure.

3. Data subjects must retain their rights.

Participants have the right to access their data, request corrections and ask for deletion. The research tool must support the controller in meeting those requests.

4. Processing must be limited to its stated purpose.

Data collected for research cannot be repurposed for model training or platform improvement without explicit consent. This is where several general-purpose AI tools introduce risk that research teams do not realise they are carrying.

5. Security must be demonstrable.

Encryption in transit and at rest, access controls, breach notification procedures and audit trails are baseline requirements.
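The five conditions above can be kept as a simple record per tool, so that gaps are visible rather than assumed away. The sketch below is illustrative only: the `ToolAssessment` class, its field names and the `unmet_conditions` helper are hypothetical, and the answers you put in still have to come from the vendor’s DPA, security documentation and your own legal review.

```python
from dataclasses import dataclass

# The five conditions from this section, in order. Names are illustrative.
CONDITIONS = (
    "dpa_signed",               # 1. a DPA exists between controller and processor
    "transfers_governed",       # 2. a lawful transfer mechanism covers processing outside the UK/EEA
    "supports_subject_rights",  # 3. access, correction and deletion requests can be met
    "purpose_limited",          # 4. data is not repurposed, e.g. for model training
    "security_evidenced",       # 5. encryption, access controls, breach procedures, audit trails
)


@dataclass
class ToolAssessment:
    """Hypothetical record of what has been verified for one tool."""
    name: str
    dpa_signed: bool
    transfers_governed: bool
    supports_subject_rights: bool
    purpose_limited: bool
    security_evidenced: bool


def unmet_conditions(assessment: ToolAssessment) -> list[str]:
    """Return the conditions that are not yet satisfied, in the order listed above."""
    return [c for c in CONDITIONS if not getattr(assessment, c)]


if __name__ == "__main__":
    survey_tool = ToolAssessment(
        name="example survey tool",
        dpa_signed=True,
        transfers_governed=True,
        supports_subject_rights=True,
        purpose_limited=False,  # e.g. inputs may be used for platform improvement
        security_evidenced=True,
    )
    print(unmet_conditions(survey_tool))  # ['purpose_limited']
```

An empty list does not prove compliance. It only shows that each of the five points has been answered for that tool.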

Why most general tools fall short of GDPR standards

The issue with most widely used research tools is not that they ignore GDPR. It is that they were designed for different problems.

General survey platforms are built for response collection at scale. Flexible databases are built for team collaboration and data organisation. ChatGPT, Claude and other general AI tools are built to be useful across as many tasks as possible. None of those design intentions prioritises the sensitivity of participant data, the need for auditability from transcript to finding, or the jurisdictional clarity that regulated research environments require.

The compliance gaps tend to be in the details: data residency that defaults to US servers unless an enterprise plan is purchased; a DPA that is available on request rather than applied automatically; AI processing that uses inputs to improve shared models unless you explicitly opt out; and transfer mechanisms that rely on contractual clauses rather than geographic containment.

The first sign that something is not right may come long after the tool has started returning coherent outputs.

Where GDPR risk actually shows up in research

GDPR risk in qualitative research, or any research for that matter, does not appear as an obvious breach. It shows up in both accumulation and assumption.

For example, a researcher pastes a focus group transcript into a general AI tool to generate themes quickly. The transcript contains names, job titles and candid opinions about a client organisation. The tool processes it on infrastructure outside the UK, routes it through several subprocessors and may use it to improve model performance depending on the plan tier. All the researcher sees is that they pasted something in and got a response out.

Another researcher stores interview recordings in a flexible database platform. The data residency defaults to US servers. Transfer mechanisms exist in the form of SCCs, but no DPA has been signed, and the researcher doesn’t know which subprocessors have access.

In each case, nobody made a deliberately wrong or unethical choice. The risk came from assuming compliance rather than verifying it at each point in the workflow. For more on how that can introduce specific reliability and bias risks in research, see our article AI bias in research.
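One way to make the difference between assumed and verified visible is to record each check as one of three states instead of a yes or no. The sketch below mirrors the two scenarios above. The step names, check names and the `audit` function are hypothetical, chosen only for illustration.

```python
from typing import Optional

# True or False means someone actually checked; None means nobody has (an assumption).
# Step and check names are illustrative, not taken from any real tool.
workflow: dict[str, dict[str, Optional[bool]]] = {
    "theme generation in a general AI tool": {
        "dpa_signed": None,
        "transfer_mechanism_in_place": None,
        "subprocessors_known": None,
        "excluded_from_model_training": None,
    },
    "recording storage in a database platform": {
        "dpa_signed": False,
        "transfer_mechanism_in_place": True,  # SCCs exist
        "subprocessors_known": False,
        "excluded_from_model_training": None,
    },
}


def audit(steps: dict[str, dict[str, Optional[bool]]]) -> dict[str, dict[str, list[str]]]:
    """For each step, list the checks that failed (False) and those never verified (None)."""
    report: dict[str, dict[str, list[str]]] = {}
    for step, checks in steps.items():
        failed = [name for name, value in checks.items() if value is False]
        assumed = [name for name, value in checks.items() if value is None]
        if failed or assumed:
            report[step] = {"failed": failed, "assumed": assumed}
    return report


if __name__ == "__main__":
    for step, findings in audit(workflow).items():
        print(step)
        print("  failed: ", findings["failed"])
        print("  assumed:", findings["assumed"])
```

Treating `None` as its own state is the point of the design: an unchecked item is reported alongside a failed one, so it cannot quietly pass as compliant.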

GDPR compliant research tools: how four platforms compare

One important thing to note is that not all tools that claim GDPR compliance were built with research data in mind. Equally, not all research tools are GDPR compliant as standard.

Here is how four commonly used platforms compare when that standard is applied to research specifically.

Typeform

Typeform provides a DPA, uses SCCs for transfers outside the EEA and supports data subject rights, including access and deletion. For straightforward survey collection at relatively low sensitivity, it’s a workable choice. That said, compliance obligations extend across every tool in the stack, not just the one collecting the data.

Qualtrics

Qualtrics has a solid GDPR foundation: a DPA with embedded SCCs, encryption in transit and at rest, granular deletion tools, and pseudonymisation support. It also holds ISO 27001 and SOC 2 Type II certifications, and UK and EU data residency is available. It does, however, require correct configuration, and Qualtrics is explicit that responsibility for applying its tools correctly remains with the researcher. For a smaller agency or an independent researcher, that configuration burden is real.

Airtable

Airtable has a DPA, EU SCCs, and ISO 27001, ISO 27701 and SOC 2 Type II certifications. It also supports deletion requests and audit logs. The significant limitation for UK and EU teams is that while EU data residency is available, it is restricted to the Enterprise Scale plan. On all other plans, data is stored on US-based AWS servers by default. For teams working with sensitive participant data in regulated sectors such as healthcare or legal, that is a meaningful restriction.

Beings

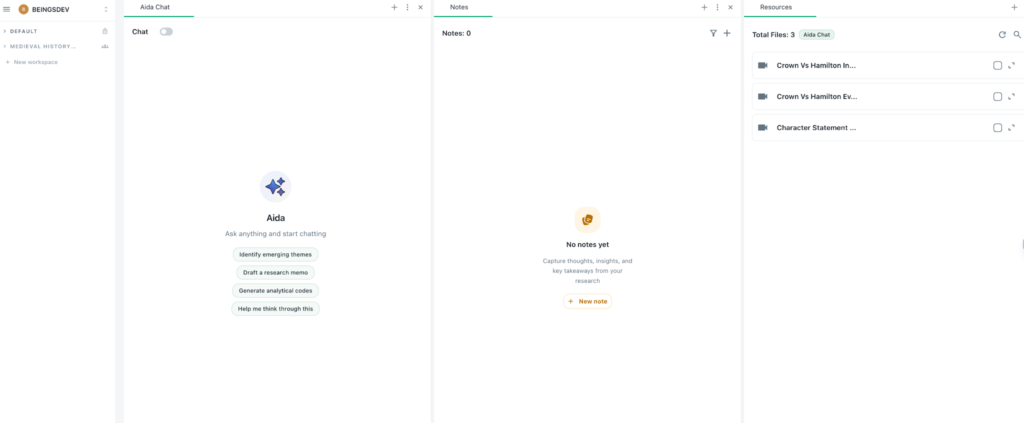

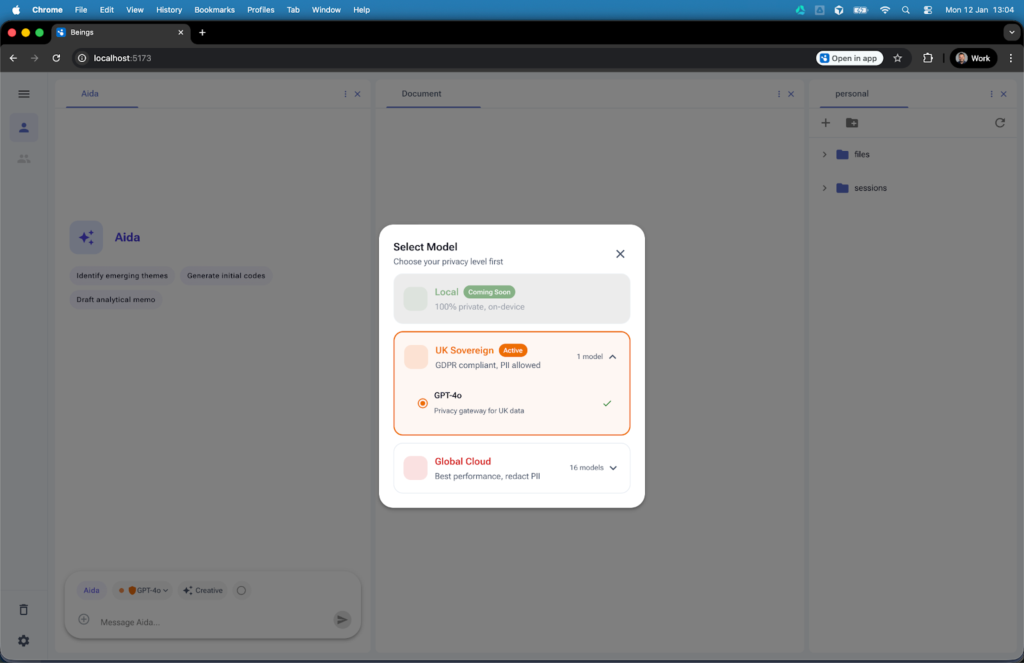

Beings is GDPR compliant by design, not by configuration. It is built specifically for qualitative research workflows rather than general data collection, with research-grade data governance and qualitative analysis built into the architecture from the start.

What sets Beings apart from other tools on the market is that it processes data on UK-based infrastructure. Participant data stays within UK jurisdiction without requiring a higher-priced enterprise plan or difficult manual configuration. That is the starting position, and the ability to change it is managed by a clear, simple traffic light system.

What’s more, Beings’ AI engine, Aida, operates on the Ground Truth principle. Analysis is restricted to the material uploaded within a specific project corpus. Aida does not pull in external knowledge unless explicitly told to. This means that it doesn’t blend data across projects and does not use research material to train shared models. Every theme and insight it delivers links directly back to the source transcript, recording or document it came from. Interpretation and judgement, while guided, remain firmly with the researcher throughout.

How to choose a GDPR compliant research tool

Before committing to any tool, ask:

- Is a DPA in place automatically, or does it need to be requested?

- Where is data processed and stored by default?

- Does the tool use research data to train AI models?

- Can client project data be separated by design, not just by convention?

- Can individual participant data be deleted cleanly when requested?
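The last two questions are easier to picture with a small example. The sketch below is a minimal illustration, assuming a hypothetical in-memory `ProjectStore`. It does not describe how any of the platforms above work internally. It shows what separation by design means in practice: every record sits under exactly one project, reads are scoped to one project, and one call removes everything held for a participant.

```python
from collections import defaultdict


class ProjectStore:
    """Hypothetical in-memory store: every record lives under exactly one project."""

    def __init__(self) -> None:
        # project_id -> participant_id -> list of records (transcripts, notes, ...)
        self._data: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))

    def add(self, project_id: str, participant_id: str, record: str) -> None:
        """Store a record under one project and one participant."""
        self._data[project_id][participant_id].append(record)

    def records(self, project_id: str) -> list[str]:
        """Reads are always scoped to one project; no cross-project query exists."""
        return [r for recs in self._data[project_id].values() for r in recs]

    def erase_participant(self, project_id: str, participant_id: str) -> int:
        """Delete everything held for one participant in one project; return how many records went."""
        removed = self._data[project_id].pop(participant_id, [])
        return len(removed)


if __name__ == "__main__":
    store = ProjectStore()
    store.add("client-a", "p1", "interview transcript")
    store.add("client-a", "p2", "focus group notes")
    store.add("client-b", "p1", "survey response")

    print(store.erase_participant("client-a", "p1"))  # 1
    print(store.records("client-a"))                  # ['focus group notes']
    print(store.records("client-b"))                  # ['survey response']
```

When evaluating a real tool, the equivalent evidence is an audit log or deletion confirmation showing what was removed, and confirmation that backups and subprocessors are covered too.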

For qualitative research involving transcripts, recordings and open-ended participant responses, particularly in regulated sectors such as healthcare, legal or enterprise environments, the tool needs to have been designed for that context.

For teams handling enterprise-level research or agency work across multiple clients, project separation needs to be enforced by design, not by convention. You can try Beings for free or contact the team to discuss your specific requirements.