Literature reviews have always been one of the most labour-intensive parts of academic and professional research: reading papers, tracking citations, and synthesising findings across dozens of sources. AI tools are now a useful option for this work, but they vary significantly in what they actually do, how reliable they are, and where they fit into a real workflow.

This guide breaks down the best AI tools for literature reviews available right now: what each one does, where it performs well, and where it falls short.

What is an AI literature review tool?

An AI literature review tool is software that uses artificial intelligence to help researchers find, analyse, organise, or synthesise academic papers. Some tools focus on discovery, helping you find relevant studies you might otherwise miss.

Others are built for analysis, such as helping you understand and compare papers you’ve already gathered. A few try to do both.

Not all tools operate the same way. Depending on the tool, the help on offer can include:

- Searching live academic databases

- Searching inside documents you upload

- Generating summaries and drafting text

- Surfacing citation relationships, or flagging where studies support or contradict each other

The right AI tool depends on where you are in the review process and what you most need help with.

What to look for in an AI literature review tool

The best AI literature review tools vary widely in what they offer, from paper discovery and citation analysis to document synthesis and team collaboration. Here are some features and use cases to consider before selecting a tool:

1. Accuracy and grounding

Does your AI tool of choice cite real papers? Does it link claims directly back to sources so you can verify them? Hallucination is a real risk with general-purpose AI tools, and for academic work, unverifiable claims are worse than no help at all.

2. Coverage

Where does the AI tool pull its data from? Some search Semantic Scholar and PubMed. Others only work with what you upload. Coverage gaps matter, especially for niche topics or older literature.

3. Fit to your workflow

Are you at the discovery stage or the synthesis stage? A tool built for finding papers won’t help much if you already have your corpus and need to extract themes from it.

4. Cost and team access

Some AI literature review tools charge per seat, which adds up quickly for research teams. Others offer flat-rate or shared access. This matters more than it might seem for collaborative projects.
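The arithmetic behind that point can be sketched in a few lines. The prices below are placeholder figures chosen for illustration, not the pricing of any tool in this guide, and the function names are hypothetical.

```python
import math


def per_seat_cost(seats: int, price_per_seat: float, months: int = 12) -> float:
    """Total cost when every team member needs a paid seat."""
    return seats * price_per_seat * months


def flat_cost(flat_price: float, months: int = 12) -> float:
    """Total cost when one monthly price covers the whole team."""
    return flat_price * months


def break_even_seats(price_per_seat: float, flat_price: float) -> int:
    """Smallest team size at which flat pricing costs no more than per-seat pricing."""
    return math.ceil(flat_price / price_per_seat)


if __name__ == "__main__":
    # Placeholder figures: 20 per seat per month versus 100 flat per month.
    print(per_seat_cost(seats=8, price_per_seat=20))   # eight seats for a year
    print(flat_cost(flat_price=100))                   # flat rate for a year
    print(break_even_seats(price_per_seat=20, flat_price=100))
```

With these placeholder numbers, flat pricing matches per-seat pricing at five users and is cheaper for any larger team. Substitute real quotes before drawing conclusions.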

5. Citation traceability

For academic work specifically, every claim needs to be traceable. Tools that link answers directly back to specific passages in source documents save significant time during the verification process.

When to use AI for literature reviews

AI tools are most useful for the following stages of research:

- Scoping a new topic quickly, especially when you’re unfamiliar with the field

- Finding adjacent or overlooked papers you wouldn’t have reached through keyword searching alone

- Extracting and comparing findings across a large corpus of documents

- Spotting citation patterns to see which papers are heavily supported and which are contested

- Producing first-draft summaries that you then refine and verify

AI tools are less suited for final systematic reviews that require reproducible Boolean search strategies, or for any situation where the quality of sources hasn’t been manually vetted. They work best as accelerators at the early and middle stages of a review, not as a replacement for expert judgement at the end.
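For readers unfamiliar with the term, a reproducible Boolean search strategy is a query that can be written down and re-run exactly. A minimal sketch of how one is commonly assembled, assuming the usual pattern of OR within a concept and AND between concepts, is shown here. The function name and example terms are hypothetical, and real databases differ in their exact query syntax.

```python
def build_boolean_query(concepts: list[list[str]]) -> str:
    """Join synonyms with OR inside each concept, then join concepts with AND.

    Multi-word terms are wrapped in double quotes so they are treated as phrases.
    """
    groups = []
    for synonyms in concepts:
        terms = [f'"{term}"' if " " in term else term for term in synonyms]
        groups.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(groups)


if __name__ == "__main__":
    # Hypothetical review question: does remote work affect productivity?
    query = build_boolean_query([
        ["remote work", "telework"],
        ["productivity", "performance"],
    ])
    print(query)
```

Because the query is a plain string built from a fixed list of terms, it can be recorded in the review protocol and re-run later, which is the property that adaptive AI search generally does not offer.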

Best AI tools for literature reviews

The best AI tools for literature reviews fall into two broad categories: tools that help you find the papers, and tools that help you analyse them. Knowing which stage of your review you’re at will determine which tool actually saves you time.

ChatGPT

What it is: ChatGPT is a general-purpose AI assistant from OpenAI. It isn’t designed specifically for literature reviews, but its paid tiers (Plus, Team, and Pro) unlock Deep Research mode, a feature that searches the web broadly, synthesises information across many sources, and produces detailed reports with citations.

Pros:

- Deep Research mode can surface a large volume of recent papers and synthesise them into a coherent report

- Strong at academic writing, paraphrasing, and drafting review sections once you have your material

- Memory features allow it to build on previous conversations

- Can be customised with instructions to match specific citation styles or writing preferences

Cons:

- Not purpose-built for academic literature; sources pulled via web search rather than curated academic databases

- Less control over which databases are searched or how sources are prioritised

- No specialised citation analysis, such as the supporting-versus-contrasting classification that Scite offers

- The research process can’t easily be re-run or audited once a report is generated

Best for: Researchers who want a general-purpose assistant that can also handle writing, and who need broad discovery rather than precise academic database searching.

NotebookLM

What it is: NotebookLM is Google’s document-grounded research tool. You upload your own papers, PDFs, videos, and other source files, and the AI works exclusively within that material: answering questions, generating summaries, producing timelines, and creating audio overviews, all tied directly back to your uploaded sources.

Pros:

- All responses are grounded in your uploaded documents, with inline citations linking back to exact passages, significantly reducing the risk of hallucination

- Analyses multiple documents simultaneously, which makes cross-paper synthesis much faster

- Supports multiple formats: PDFs, Google Docs, YouTube URLs, audio files

- Free tier includes access to core Studio features, Video Overviews, and Audio Overviews, though daily usage is capped (3 Audio and Video Overviews per day)

- Collaborative features let you share notebooks with colleagues

Cons:

- Cannot search for new papers. It only works with what you upload, so you need to find your corpus elsewhere first

- Occasional inaccuracies in citations, including page mismatches, mean outputs need careful verification

- Notebooks are independent; there’s no cross-referencing between them

- Can oversimplify nuanced arguments, particularly in highly technical fields

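- Dedicated mobile apps for Android and iOS arrived only in 2025, so check that the features you rely on are available outside the web version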

Best for: Researchers who already have their papers and need to synthesise, interrogate, and extract themes from them quickly. Works well in combination with discovery tools.

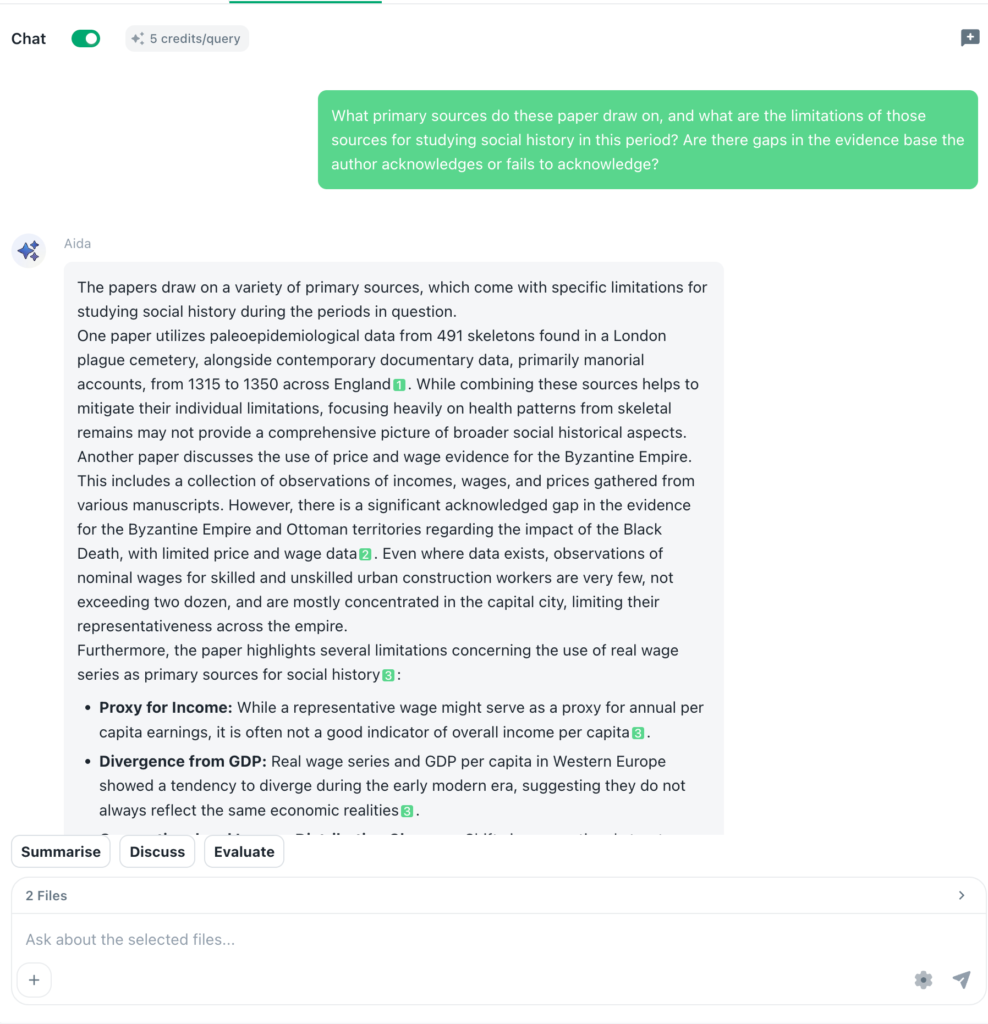

Beings

What it is: Beings takes a different approach to AI-assisted research. Rather than searching external academic databases, it’s built for analysing large collections of documents you bring to it, including internal research files, proprietary reports, and uploaded papers, finding information across all of them simultaneously. Everything it surfaces is grounded in your source material with no risk of hallucination, and it links every piece of information directly back to the citation it came from.

Pros:

- Analyses multiple documents at the same time, making it well-suited to literature reviews where you’re working across a large corpus of files

- Ground-truth analysis means outputs are tied directly to source material, with no generated claims that can’t be verified

- Citations link directly back to the specific passages they come from, making it easy to check the basis of any finding

- All data is processed in the UK, which matters for teams handling sensitive documentation or working under strict data governance requirements

- Cost-effective for teams. Flat pricing with no per-seat charges means the whole team can access the same workspace without costs scaling with headcount

- Work can be shared directly with colleagues, supporting collaborative review projects

Cons:

- Does not search academic databases; you need to bring your own documents

- Less suited to the discovery phase of a review, where you’re still finding papers

- Better positioned as a synthesis and analysis layer than a standalone end-to-end tool

Best for: Teams working through a large document corpus who need reliable, citation-backed analysis without paying per user. Also particularly useful for organisations doing proprietary or internal research reviews where the source material isn’t publicly searchable, or where data governance and jurisdiction matter.

Scite

What it is: Scite is a citation intelligence platform built around what it calls “Smart Citations”. Rather than simply counting how many times a paper has been cited, it classifies each citation as supporting, contrasting, or mentioning. This means that you can see at a glance whether a paper’s findings have been reinforced or challenged by later research. It indexes over 1.6 billion citations across 280 million sources, and has direct agreements with major publishers, including Wiley and SAGE, for full-text access.
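To make the three-way classification concrete, here is a minimal sketch of how labelled citations could be tallied for one paper. It does not use Scite's API or reflect how Scite works internally; the label list, function names, and sample data are all assumptions for illustration.

```python
from collections import Counter
from typing import Iterable

# The three classes named above; the exact strings are an assumption.
LABELS = ("supporting", "contrasting", "mentioning")


def tally_citations(labels: Iterable[str]) -> dict[str, int]:
    """Count how many citing statements fall into each class."""
    counts = Counter(labels)
    unknown = set(counts) - set(LABELS)
    if unknown:
        raise ValueError(f"Unexpected labels: {sorted(unknown)}")
    return {label: counts[label] for label in LABELS}


def contested_share(tally: dict[str, int]) -> float:
    """Fraction of non-neutral citations that contrast with the paper's findings."""
    decided = tally["supporting"] + tally["contrasting"]
    return tally["contrasting"] / decided if decided else 0.0


if __name__ == "__main__":
    # Hypothetical labels for the citations a single paper has received.
    sample = ["supporting", "mentioning", "contrasting", "supporting"]
    tally = tally_citations(sample)
    print(tally)
    print(contested_share(tally))
```

A raw citation count would treat all four citations in the sample the same. The tally separates the one that challenges the finding from the two that back it up.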

Pros:

- Smart Citations are genuinely unique: working out whether a finding is supported or contested is one of the most time-consuming parts of a literature review, and Scite surfaces that information directly

- Scite Assistant (AI chatbot) answers research questions with verifiable citations drawn from real papers, not generated from thin air

- Custom dashboards let you track key papers, authors, or topics and receive real-time alerts when new citations appear

- Browser extension overlays citation context while you’re reading papers online

- Works with Zotero for reference management

- Covers humanities and social sciences as well as STEM

Cons:

- No free tier. The individual plan starts at around $20/month

- Citation coverage can be uneven for older literature and some disciplines, where full-text access is limited

- The AI assistant produces shorter, less synthetic summaries than some competing tools. It’s better for targeted citation analysis than broad overview generation

- The interface has a learning curve, particularly for researchers used to traditional Boolean search tools

Best for: Researchers who need to evaluate the strength and reliability of evidence around a specific claim or paper, and who want to understand where the scientific consensus actually sits.

Undermind

What it is: Undermind is a scientific literature search and synthesis tool built by MIT researchers. It connects to Semantic Scholar (which aggregates PubMed, arXiv, and other sources) and uses an iterative, adaptive search process, running multiple rounds of keyword, semantic, and citation searches that adjust based on what it finds. The result is a detailed report delivered by email that includes relevance scores, a citation network, a timeline, and an AI-generated narrative review.

Pros:

- The adaptive search methodology is well-suited to complex, multi-faceted research questions that keyword searching handles poorly

- Comprehensive reports include relevance scores for each paper and a citation network showing how influential each paper is within the literature

- Claims to locate around 93% of relevant papers on a given topic, according to internal benchmarking

- On the Pro plan, searches and analyses the full text of papers where it is available

- No software installation required; results are shareable via links

Cons:

- Slow response time. Reports take 8 to 10 minutes to generate, which limits usefulness when iterating quickly

- Pulls significantly from arXiv, which is primarily a preprint server, meaning some sources may not be peer-reviewed

- The free tier limits users to five searches per month

- Works best with specific, detailed queries about empirically researched topics; less suited to broad or exploratory questions

- The interface can feel cluttered once results come in, and the lack of COUNTER statistics may matter for library deployments

Best for: Researchers who need comprehensive, deep coverage of a well-defined research question and can work with a slightly slower turnaround in exchange for breadth and depth.

How these tools fit together

No single tool covers the full literature review process from start to finish. In practice, the most effective approach combines tools by stage:

Discovery: Finding relevant papers you didn’t already know about. Undermind, Scite, and ChatGPT’s Deep Research mode are strongest here. Undermind suits deep, complex queries; Scite is good for tracing citation networks outward from a known paper; ChatGPT covers broad ground quickly.

Evaluation: Assessing whether papers are reliable and relevant. Scite’s Smart Citations stand alone in this area, letting you see how the wider literature has engaged with a specific paper’s findings.

Synthesis and analysis: Extracting themes, comparing findings, and drafting review sections. NotebookLM and Beings both work well here. The key difference is that NotebookLM is designed for individual researchers working with a mixed-format corpus, while Beings is built for teams working across large document sets who need shared access and no hallucination risk.

Find the right AI approach for literature reviews

The most important question is which tool matches where you are in the review process, not which tool is best in the abstract. AI tools don’t replace the intellectual work of synthesis. They reduce the mechanical work that precedes it: the searching, reading, cross-referencing, and organising that currently consumes most of the time a literature review actually takes. Used well, they give you more time to do the thinking that matters.

For teams working through large volumes of research who need outputs they can trust, try Beings for free and see how it fits into your literature review workflow.