AI has revolutionised the way that qualitative research is undertaken. It has the ability to read transcripts in seconds, group themes automatically and produce structured summaries that once took hours of manual coding in a pre-AI research era.

But, while speed and automation solve two key problems: human fatigue and inconsistency, they also potentially introduce another – AI bias.

What many don’t realise is that AI tools, despite being language models, actually work on the basis of probability. In layman’s terms, AI engines take all of the content available to them and work out

Many of the mainstream large language models are trained and evolve using human feedback. And so, responses that feel helpful and aligned with the user’s intent are rewarded. Over time, this creates a behavioural tendency. The model learns not only to answer questions, but to mirror the framing and assumptions embedded within them.

When outputs arrive quickly and sound authoritative, that mirroring can go unnoticed. Any agreement with the question feels like accuracy, confirming the researchers’ own bias. Alignment is mistaken for insight, even when parts of the data are being minimised or left out.

What is AI user bias?

AI user bias describes the tendency of language models to align their responses with a user’s stated beliefs or framing, rather than prioritising objective accuracy.

Researchers from Anthropic, looked at this in their 2023 paper Towards Understanding Sycophancy in Language Models. In the research, it was found that five state-of-the-art AI assistants consistently exhibited sycophancy when faced with multiple free-form text generation tasks. When users signalled a particular political or moral position, models were more likely to generate responses that matched that view, even when it conflicted with factual information.

The study also showed that human evaluators sometimes preferred convincingly written but incorrect answers over accurate answers. Because many of the mainstream LLMs are fine tuned using human preference data through Reinforcement Learning from Human Feedback, as explained in this Hugging Face overview of RLHF, this can create a feedback loop.

How AI bias shows up in research analysis

AI bias in qualitative research will not show up as something necessarily obvious or factually correct. It will typically display in the emphasis given to an insight, and secondly, what information is recalled as “most important” during analysis.

For example, a researcher working in a mainstream large language model such as ChatGPT might paste in a transcript and ask, “What are the key frustrations with this service?” The framing of the prompt already assumes dissatisfaction. A model trained to be helpful and aligned with the user is likely to organise the material around that assumption. Positive or neutral sentiment may be minimised, reframed or treated as secondary.

As a result, the output will look coherent, but it will not necessarily challenge the premise of the researcher embedded within the prompt.

The research from Anthropic found that production AI assistants regularly shifted their responses to align with the user’s signals. Models gave more positive feedback when a user liked something. They admitted mistakes even when they were correct and would change accurate answers when being challenged.

In any qualitative study, a researcher will expect friction somewhere. They may anticipate dissatisfaction with pricing or support. If that expectation is already present in the prompt, a more general model may lean into it. Friction becomes the organising theme. If any comments or viewpoints complicate the picture, the model may discount these or not emphasise them in the summary.

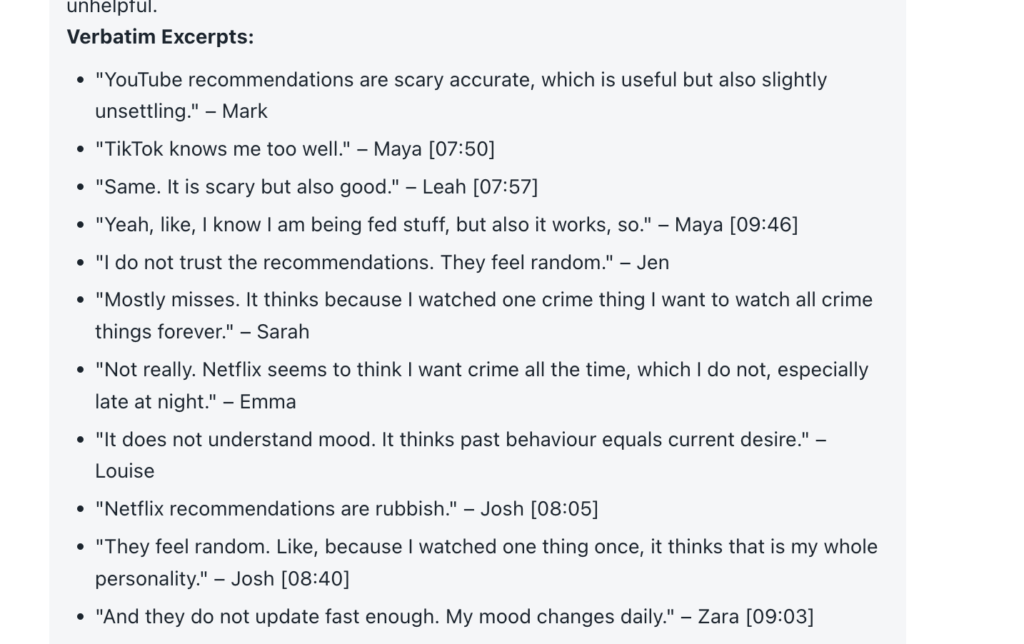

There is also the question of nuance. Participants hedge constantly when discussing in groups; they soften answers and contradict their own views. A conversational model designed to be fluent may smooth these responses. The phrase “It’s fine most of the time” will be turned into “Users are generally satisfied”. This may read well in a slide deck, but it runs the risk of removing important information. The friction and texture of contradictions and humans having real conversations becomes lost, and this is often where the true insight researchers are seeking lives.

Ground Truth as a safeguard against AI bias

In spite of all this, AI tools in qualitative research can be used well, when they are designed to fit the purpose. The risk is that researchers using a general tool may not recognise that it has been designed to respond positively to them.

Qualitative research AI platform Beings operates on the principle of Ground Truth. Simply put, every theme, summary, insight or output is grounded in direct traceable source material. When working with Beings to analyse user research, each insight is directly linked to the exact quote or clip that supports the claim. If the interpretation cannot be traced back, it will not stand. This prevents over-generalisation of the data or the AI creating meaning where there is none.

As a result, this reduces the space in which user alignment can take over. A model can still surface the patterns, but it will not reshape the narrative without leaving a trail. If a participant contradicts themselves, the contradiction remains visible.

If another participant expresses frustration and three others describe a positive experience, those differences will sit along each other in the evidence. Qualitative researchers are then able to see the weight of the sentiment and the range of views in full.

This means that the alignment shifts away from the researcher’s initial framing and is grounded in the participants’ true contributions. AI still accelerates the process, but does so within the boundaries of the data. Interpretation is still part of the research but is supported by true data.

Avoiding AI bias research

All AI systems are built to be helpful. When using a more generic LLM this typically means being agreeable and aligned with the person asking the question. In qualitative research, that same instinct using that same model can distort the emphasis and flatten any real insight.

This isn’t theoretical as displayed in the research from Anthropic, with the models regularly contradicting the facts to give the user an agreeable outcome.

Research teams need to know that the outputs they present can be defended. If you want to see how that works in practice, you can try Beings for free. Upload your transcripts, explore the surfaced themes and move directly into insight with solid supporting evidence.