Legal teams can use AI tools in contract analysis to summarise long agreements, extract clauses, flag risks, and point out discrepancies between versions. What these tools cannot do is replace the judgment a lawyer brings to a contract. Anyone promising otherwise is overselling.

What is AI-assisted contract review?

AI-assisted contract review uses machine learning and large language models to read contracts quickly, flag what matters, and compare one document against another or against a template. It doesn’t replace the lawyer in the process, but it does give them a faster first pass.

Summarising long agreements

The most common use of contract analysis AI, and usually the first one lawyers try, is feeding a long agreement into a tool and asking for a summary. The tool draws out the main commercial terms, key obligations, and anything that looks unusual. For a standard NDA or a routine vendor contract, this can cut the first review from twenty minutes to just two.

Where it starts to wobble is on longer, more bespoke agreements. A 60-page master services agreement with heavily negotiated schedules is a different ask from a standard-form contract.

Risk scoring and flagging

Most contract analysis AI tools will score a contract against a set of criteria, either a built-in playbook or one your team has trained it on. Clauses that fall outside your standard positions get flagged. So do missing clauses, unusual language, and terms that shift risk in a direction you’d normally push back on.

For commercial teams reviewing a steady flow of inbound contracts, especially in procurement and vendor management, this feature saves meaningful time. You stop reading every contract from the top and start by reviewing the flagged sections.

Where it falls down is anything novel. As one lawyer put it bluntly in a recent r/legaltech Reddit thread, AI tools will miss clauses with language they don’t recognise, even when given a playbook to work from. And in legal, there’s a lot of language your AI tool may not recognise.
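To make the playbook idea concrete, here is a minimal Python sketch of a keyword-based check. It is an illustration only: real tools rely on trained models rather than phrase lists, and the playbook entries, the `check_playbook` function, and the sample contract text are all hypothetical.

```python
import re

# Hypothetical playbook: clause type -> phrases that signal the clause is present.
PLAYBOOK = {
    "limitation of liability": [r"limitation of liability", r"liability .* shall not exceed"],
    "governing law": [r"governed by the laws? of"],
    "termination": [r"terminate this agreement"],
}

def check_playbook(contract_text, playbook=PLAYBOOK):
    """Return the playbook clause types with no matching phrase in the text."""
    text = contract_text.lower()
    missing = []
    for clause_type, patterns in playbook.items():
        if not any(re.search(p, text) for p in patterns):
            missing.append(clause_type)
    return missing

# Assumed sample: the liability cap is present, but worded in an unexpected way.
sample = (
    "This Agreement is governed by the laws of England and Wales. "
    "The Supplier's total exposure under this Agreement is capped at the Fees paid."
)
missing_clauses = check_playbook(sample)
print(missing_clauses)
```

The sample is flagged as missing a limitation of liability clause even though the cap on “total exposure” does that job. The termination flag is correct. Wording the playbook doesn’t recognise produces exactly this kind of unreliable result, which is why the flags are a starting point for review rather than a verdict.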

Clause extraction

Clause extraction is the most mechanical of these tasks. You point the tool at a contract and ask it to pull every instance of a specific clause type, say every indemnity or every limitation of liability.

For contract repositories and due diligence work, this is where AI can really make things easier. Running 200 NDAs through a tool to find every non-standard confidentiality carve-out is more efficient than asking a junior associate to read all 200 by hand.

The catch is consistency. Contracts drafted across jurisdictions or in different house styles produce inconsistent results, because the tool might pull what looks like an indemnity clause and miss one worded as a hold-harmless doing the same job under a different name.
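The hold-harmless problem is easy to reproduce in a few lines of Python. This is a simplified sketch, assuming clauses have already been split out and numbered. The pattern list, the `extract_clauses` function, and the sample clauses are hypothetical, and a production tool would classify clauses with a model rather than regular expressions.

```python
import re

# Hypothetical synonym list: different wordings that do the job of an indemnity.
INDEMNITY_PATTERNS = [
    r"\bindemnif(?:y|ies|ied|ication)\b",
    r"\bindemnit(?:y|ies)\b",
    r"\bholds?\s+harmless\b",
]

def extract_clauses(clauses, patterns):
    """Return the numbers of clauses whose text matches any of the patterns."""
    compiled = [re.compile(p, re.IGNORECASE) for p in patterns]
    return [num for num, text in clauses.items() if any(c.search(text) for c in compiled)]

# Assumed sample contract, keyed by clause number.
contract = {
    "8.1": "The Supplier shall indemnify the Customer against third-party claims.",
    "8.2": "The Customer shall hold harmless the Supplier from losses arising from misuse.",
    "9.1": "Each party shall keep the other's information confidential.",
}

# Searching only for the word "indemnify" misses clause 8.2.
naive = extract_clauses(contract, [r"\bindemnif(?:y|ies|ied|ication)\b"])
# Adding the hold-harmless wording catches both.
broader = extract_clauses(contract, INDEMNITY_PATTERNS)
print(naive, broader)
```

The narrow search returns only clause 8.1, while the broader list returns 8.1 and 8.2. The same gap appears in any tool whose idea of an indemnity is narrower than the drafting it meets.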

Finding discrepancies across versions

Contract negotiations generate a lot of versions. A draft goes to counsel, redlines come back, counter-redlines follow, then a clean version gets revised again once the commercial team weighs in. Keeping track of what actually changed, and whether a change made in one round survived into the next, is a known time-sink.

Contract analysis AI can compare versions and surface every material change, not just the tracked edits. That includes changes made outside track changes, and clauses that have been moved, renumbered, or silently deleted. This catches things manual comparison misses, particularly when track changes get turned off, accepted by mistake, or lost as a document passes through enough hands.

The limitation is straightforward. AI will flag what changed. It won’t always tell you whether the change matters. Someone still has to read the flags and decide what’s worth a redline.
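As a rough illustration of comparison that doesn’t depend on track changes, the Python sketch below classifies clause-level changes using the standard library’s `difflib`. It assumes each version has already been split into numbered clauses. The `compare_versions` function, the 0.6 similarity threshold, and the sample clauses are assumptions made for the example, not a description of how any particular product works.

```python
import difflib

def similarity(a, b):
    """Word-level similarity between two clause texts, from 0.0 to 1.0."""
    return difflib.SequenceMatcher(None, a.split(), b.split()).ratio()

def compare_versions(old, new, threshold=0.6):
    """Classify clause-level changes as (kind, old_number, new_number) tuples."""
    new_num_by_text = {text: num for num, text in new.items()}
    old_texts = set(old.values())
    changes, reworded_nums = [], set()
    for num, text in old.items():
        if text in new_num_by_text:
            # Same wording survives; report it only if the number changed.
            if new_num_by_text[text] != num:
                changes.append(("renumbered", num, new_num_by_text[text]))
        elif num in new and new[num] not in old_texts and similarity(text, new[num]) >= threshold:
            changes.append(("reworded", num, num))
            reworded_nums.add(num)
        else:
            changes.append(("deleted", num, None))
    for num, text in new.items():
        if text not in old_texts and num not in reworded_nums:
            changes.append(("added", None, num))
    return changes

# Assumed sample versions: the notice period changes and the insurance clause vanishes.
old_version = {
    "4": "Either party may terminate on 30 days' written notice.",
    "5": "The Supplier shall maintain insurance of at least GBP 5 million.",
    "6": "This Agreement is governed by the laws of England and Wales.",
}
new_version = {
    "4": "Either party may terminate on 90 days' written notice.",
    "5": "This Agreement is governed by the laws of England and Wales.",
}
changes = compare_versions(old_version, new_version)
print(changes)
```

On this sample the sketch reports clause 4 as reworded, clause 5 as deleted, and clause 6 as renumbered to 5. It says nothing about whether a 90-day notice period or a missing insurance clause is acceptable. That part is still the lawyer’s call.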

How does contract analysis AI work?

Most contract analysis tools sit on top of a stack of four technologies, all worth knowing before you buy.

Large language models (LLMs)

LLMs are the layer most people mean when they say “AI”. They’re what lets a tool read a contract, summarise it in plain English, or draft a redline. They’re good at surface-level comprehension, but they don’t reason from a structured understanding of contract law. On their own, they’re not enough.

Natural language processing (NLP)

NLP is the older sibling. It predates the current wave of LLMs by decades, and it’s what contract tools have used for years to identify clauses, tag defined terms, and map how a document is put together. Where LLMs generate fluent text, NLP parses and classifies. The two work together.

Optical character recognition (OCR)

OCR converts scanned contracts and image-based PDFs into text a computer can read. It’s the foundation layer, because a lot of the contracts lawyers deal with arrive as scans of signed copies or PDFs that have been through too many print-scan cycles. Poor OCR produces garbled text, and garbled text produces unreliable analysis further up the stack.

Conversational AI for querying contracts

Conversational AI is the layer that lets you ask a contract a question in plain English and get an answer back. It lowers the bar for non-specialists, but the answer is only as reliable as the underlying retrieval and the prompt it was given. The question to ask any vendor: when the tool answers, can you click through to the exact clause it drew from? If the answer is no, you’re back to verifying every response by hand.
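The click-through requirement is easier to picture with a toy example. The Python sketch below uses plain keyword overlap as a stand-in for retrieval, and returns the clause number alongside the text so the source can be checked. Everything in it is hypothetical, including the `find_source_clause` function, the stopword list, and the sample clauses. Real tools typically use more capable retrieval than word matching.

```python
import re

# Assumed stopword list, kept short for the example.
STOPWORDS = {"the", "a", "an", "of", "is", "what", "which", "this",
             "to", "in", "for", "and", "does", "how"}

def tokenize(text):
    """Lowercase a string and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))

def find_source_clause(question, clauses, min_overlap=2):
    """Return (clause_number, clause_text) for the best keyword match, or None."""
    q_words = tokenize(question) - STOPWORDS
    best_num, best_score = None, 0
    for num, text in clauses.items():
        score = len(q_words & tokenize(text))
        if score > best_score:
            best_num, best_score = num, score
    if best_score < min_overlap:
        return None  # no grounded source found: better to say so than to guess
    return best_num, clauses[best_num]

# Assumed sample contract, keyed by clause number.
contract = {
    "12.1": "Either party may terminate this Agreement on 30 days written notice.",
    "14.3": "This Agreement is governed by the laws of England and Wales.",
}

cited = find_source_clause("Which laws is the Agreement governed by?", contract)
uncited = find_source_clause("What is the late payment interest rate?", contract)
print(cited, uncited)
```

The first question comes back with clause 14.3 attached, so a reader can check the answer against the source. The second returns nothing, because no clause in the sample covers late payment interest. A tool that answers that second question anyway is the one to worry about.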

How accurate is AI at reviewing contracts?

Accuracy is the question lawyers circle back to, and rightly so. A contract analysis tool that misses a clause, or invents one that isn’t there, isn’t saving time. It’s creating a new kind of risk. The honest answer is that AI is good enough to be useful, and not good enough to be trusted on its own. Knowing where it breaks down is the starting point for using it well.

Hallucinations and missed clauses

Hallucination is the AI industry’s term for output that looks plausible but isn’t grounded in the source material. In contract analysis, that can mean a summary referencing a clause the contract doesn’t contain, or a risk score flagging a problem that isn’t there.

Missed clauses are the other side of the same problem. An AI tool can fail to surface a clause that’s present, particularly when the language is non-standard or the clause is buried in a schedule rather than the main body. For low-stakes review, these failure modes are an acceptable trade-off against the time saved. For anything with meaningful legal or commercial consequences, they’re the reason a lawyer still has to read the contract.

Bias in training data

Every AI tool is a product of what it was trained on. A tool trained heavily on US commercial agreements will perform differently on a contract drafted under English law, or for a sector it hasn’t seen much of. Construction, energy, and other heavily regulated industries tend to have drafting quirks that general-purpose tools handle unevenly.

The model also carries the drafting conventions of the contracts it learned from. If those conventions lean supplier-friendly over customer-friendly, the tool’s sense of what “standard” looks like will reflect that lean. A clause that’s standard in a buyer’s playbook might read as unusual to the tool, and vice versa. Contract analysis AI works best when the tool can be tuned with the kind of contracts your team actually works with.

Sycophancy and over-agreement with the prompt

Sycophancy is the tendency of an AI model to agree with whatever the person asking it is already inclined to think. Ask a contract analysis tool “is this clause unusual?” and there’s a reasonable chance it will tell you it is, even when it isn’t. This is a subtler failure mode than hallucination, and potentially a more dangerous one. A hallucinated clause is a clear error a lawyer can spot. An AI that quietly confirms your existing read on a contract is harder to catch.

The practical response is to frame prompts neutrally. Not “flag any problems with this indemnity”, which primes the tool to find problems. Instead, “summarise the scope and any limitations of this indemnity”, which asks for a description rather than a verdict.

Why human review stays central

Put the three failure modes together and the picture is clear. AI contract analysis is useful, but it produces unreliable outputs often enough to need checking. Which means a lawyer still has to read the contract.

This isn’t a controversial position inside the legal profession. It’s the default one. Lawyers on r/legaltech consistently say the same thing: they use AI as a faster first pass, and review what it produces before relying on it. A more accurate model doesn’t remove the need for review. It just changes the ratio. Less re-reading, more spot-checking, but the review step stays. For regulated teams answering to boards and auditors, an output labelled “the AI said so” isn’t a defensible audit trail.

Where AI-assisted contract analysis fits into legal workflows

The short answer: less of the workflow than the marketing suggests, and a different part of it than most people expect.

| | What AI handles well | What still needs a lawyer |
| --- | --- | --- |
| Task type | Repetitive, pattern-recognition work | Judgment calls and interpretation |
| Examples | First-pass review of standard agreements, clause extraction across a portfolio, version comparison on heavily negotiated drafts | Reading a clause in the context of the commercial relationship, weighing risk against business goals, spotting drafting ambiguity that no playbook would flag |
| Where it saves time | High-volume tasks where volume is the bottleneck | Work that requires legal thinking, not just legal reading |

Using Beings for contract analysis

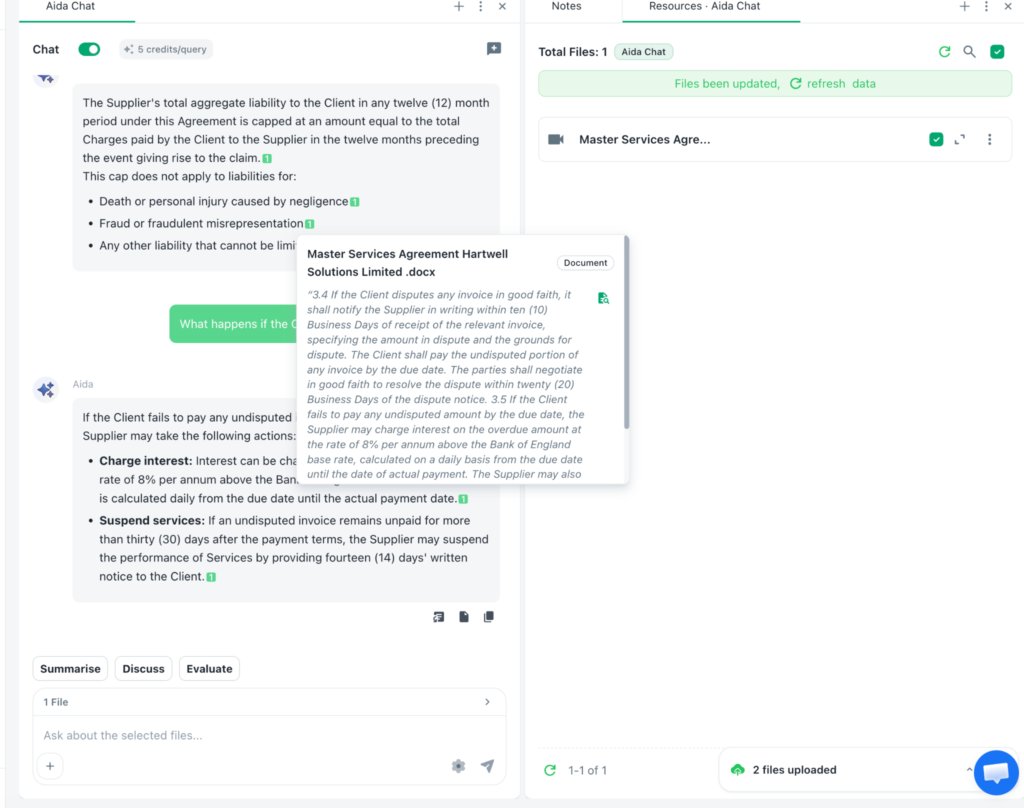

For legal teams looking for an AI tool that takes accuracy and traceability seriously, Beings is built with those concerns at its core. Aida, Beings’ AI co-partner for analysis, operates on a different principle from a general LLM. Every output has to trace back to the source material, and nothing gets invented in the gaps.

Ground-truth reasoning with direct citation traceability

The single biggest gap in general-purpose AI contract review is that you can’t defend a claim back to its source. Aida is built the other way around. Every observation, summary, or answer it produces is linked directly to the clause, sentence, or passage it came from. You click the citation, you see the source.

Under the Ground Truth principle, Aida only works with what’s actually in your contracts. If the evidence for a given claim isn’t there, it doesn’t get made. The output reflects what’s in the contracts, nothing more.

Handling messy and unstructured contract data

Most contract data doesn’t arrive in a clean, tagged, structured form. It comes as scanned PDFs, signed copies with handwritten notes in the margins, and occasionally as photographs taken on a phone. A tool that only works on clean input isn’t much use to a legal team dealing with real-world documents.

Aida handles the messy stuff. Scans run through OCR before analysis. Unusual formatting and non-standard drafting conventions are parsed and interpreted rather than rejected. Documents from multiple jurisdictions, drafted in different house styles, can be analysed in the same workflow.

Analysing across multiple documents at once

A lot of contract work isn’t about a single document. It’s about the relationship between documents. A master agreement and its schedules. A framework contract and its statements of work. Due diligence on a data room full of agreements that reference each other.

Aida is built for multi-document analysis. You can upload a whole set of related contracts and ask questions across all of them. Which agreements reference this commercial term? Where are the termination rights inconsistent? What obligations from the master flow down into the statements of work? Aida does the cross-referencing and points back to the exact passages it drew from.

Secure handling of sensitive legal information

Contract data is some of the most commercially sensitive material a business holds. Deal terms, pricing, personal data covered by GDPR. A contract analysis tool that processes this data in ways the legal team can’t account for is a liability before it’s a time-saver.

Beings is built with this in mind. Data is processed in the UK, which matters for teams working under UK GDPR or advising clients who do. Pricing is flat-rate across a team, so cost doesn’t gate access when the tool needs to be rolled out widely. Retention and access controls sit with the customer, not buried in a default setting most teams never check.

For in-house teams and firms handling sensitive work, these aren’t nice-to-haves. They’re baseline requirements, and they shape which tools can realistically be used on live matters.

See how Beings handles your contract analysis

Contract analysis AI is a useful first pass, not a replacement for legal judgment. The tools worth keeping are the ones where accuracy and traceability are defaults, not enterprise features bolted on later.

Beings is built that way. Try it for yourself: Aida works alongside your team as a co-partner, grounded in your actual contracts, with every output traceable back to the source. For in-house teams, commercial lawyers, and firms handling sensitive work, that’s the difference between a tool you can use on live matters and one you can’t.