AI is now woven into many qualitative research workflows. It can translate, read and digest interview transcripts, group open text responses, identify themes in participant or patient feedback, and turn hours of conversation into structured summaries within minutes.

Across healthcare, universities, public bodies and commercial insight teams, AI in research has reshaped how qualitative data is coded, analysed and translated into clear findings.

Using AI for research effectively still requires a deliberate approach. At its core, best practice is about keeping accountability visible, understanding how the chosen tool handles data, and treating AI output as structured input into human reasoning, rather than a finished conclusion.

Before exploring specific platforms or workflows, it makes sense to ground your use of any research AI tool or platform in a clear set of operating principles that protect depth, challenge assumptions and maintain trust in the findings.

Three essential foundations before adopting AI in research

Best practice for using AI in research begins with recognising it as a tool within the research process, one that supports professional judgement rather than replaces it.

1. Understand how your AI tool uses your data

Before focusing on prompts, themes or speed, start with a more fundamental question. What happens to your data once you upload it into an AI tool?

Different AI tools operate under very different data policies. Some general-purpose systems, such as ChatGPT, may use inputs in ways that differ from dedicated research platforms that isolate workspaces and ringfence project data.

For anybody working in research, that difference carries weight. It affects where data is stored, who can access it, whether it contributes to model training, and how findings can be shared internally or externally.

In qualitative research, where transcripts, recordings and open text responses often contain sensitive or identifiable material, the implications are more immediate. Researchers need to know how long data is retained, where it sits, and how it aligns with internal governance and ethical standards.

These questions should be addressed early. Procurement and information governance teams are easier to involve at the beginning of adoption than after AI has already become embedded in day-to-day workflows. Before prompts, summaries or themes are generated, there needs to be confidence in how data is being handled.

2. Understand sycophancy in AI, and actively guard against it

When using AI in research, AI user bias rarely shows up as an obvious error. It typically shows up as overagreement.

Many generalist language models are designed to produce responses that feel helpful and aligned with the user’s intent. In research settings, that can mean outputs reflecting the framing of a question rather than fully interrogating the data. This was illustrated in a study by Anthropic, which showed many generalist models would adjust their output to agree with the sentiment and feelings of the person writing the prompts.

Best practice in using AI in research depends on staying aware of these limitations, and of the exaggerations they can produce.

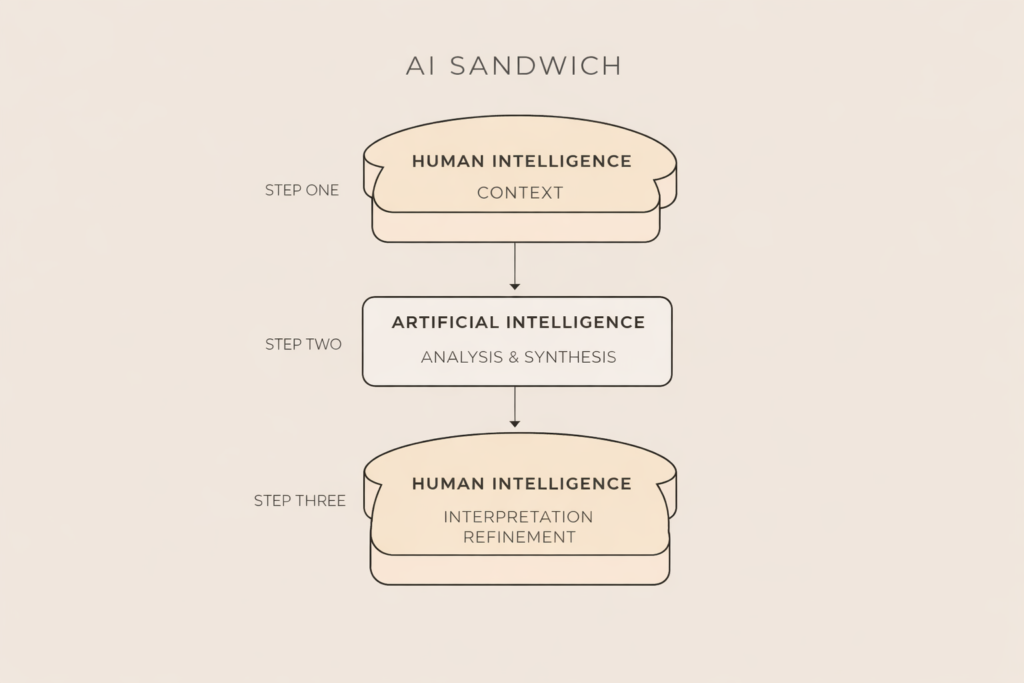

3. Use the “sandwich” model: human context around AI output

AI can cluster, summarise and structure qualitative data at scale, but responsibility for interpretation still sits with the researcher.

One practical way to approach this is to apply what has been described in educational settings as the “sandwich model”. Originally used in blended learning design, the model places independent work between two guided touchpoints. In teaching, students receive direction, engage independently, and then return for structured discussion to consolidate learning.

Applied to AI in research, the principle is similar. Place AI output between two layers of human judgement. The first comes before analysis and sets context, objectives and constraints. The second comes after analysis and interrogates, challenges and refines what the model has produced.

In practice, this could mean clarifying the decision the research is meant to inform before uploading any data. It could mean asking the model to highlight gaps or uncertainty in its themes. It could mean revisiting key transcripts once patterns are generated to check whether nuance has been compressed or overlooked.

How to use Beings’ qualitative AI tool effectively in research

For teams that want structure around AI in research rather than open-ended experimentation, Beings is designed specifically for qualitative workflows, which means the guardrails and transparency mechanisms sit closer to the research process itself.

Here is how to use it in a way that mirrors best practice:

1. Start a new project with a clear research context

Begin by creating a new project and uploading your source material, whether that is interview transcripts, focus group recordings, survey open text responses or mixed qualitative inputs.

Before asking for analysis, add context.

Confirm:

- The purpose of the research

- The audience for the findings

- The decisions this research will inform

- Any known constraints or hypotheses

When working with Aida, Beings’ AI research assistant, the quality of output improves significantly when objectives are clearly stated. AI in research performs better when it understands not just the data, but the decision environment around the data.

This is the first layer of the sandwich model. Human judgement frames the task before AI begins its work.

2. Instruct Aida to evaluate

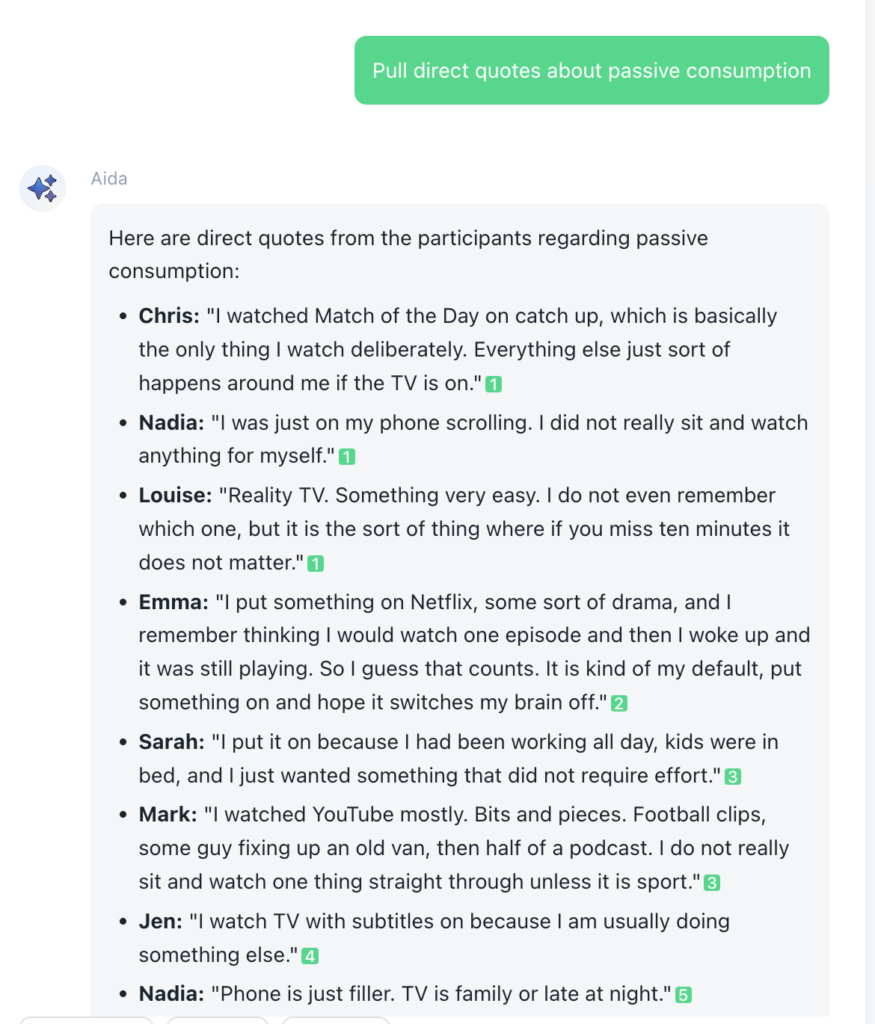

This is where many teams unintentionally introduce AI user bias in research, particularly when dealing with more generalist tools. Thankfully, Aida works on the Ground Truth principle, ensuring that every output is linked to a verbatim quote.

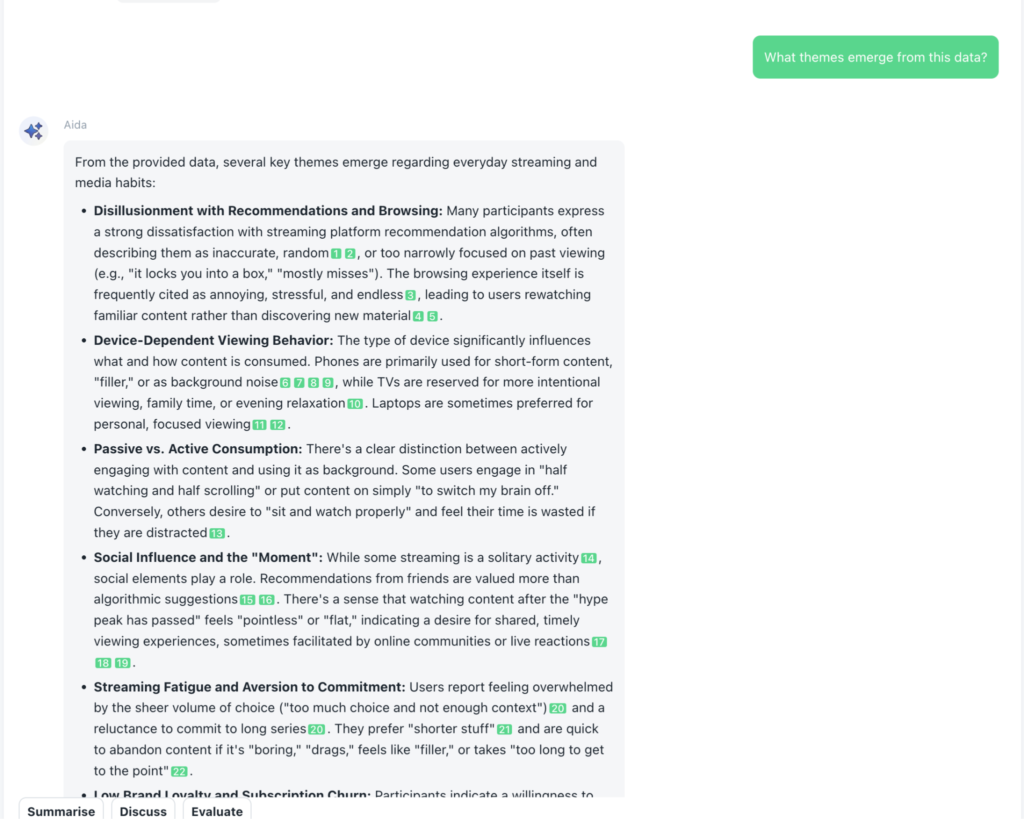

Even so, avoid leading questions and frame prompts in ways that open up interpretation. For example:

- “What themes emerge from this data?”

- “Where do participant views diverge?”

- “What evidence challenges the dominant narrative?”

- “What assumptions might a researcher bring to this dataset?”

You can also ask Aida to identify minority viewpoints or contradictions. This helps counter evaluation bias in AI by widening the analytical lens.

The aim is to treat AI as an analytical partner that probes, rather than a system that simply validates.

3. Go deeper, uncover layered themes

Once initial themes are generated, use the tool to go further into the data.

Ask Aida to:

- Break down themes by demographic or segment

- Identify emotional drivers beneath stated opinions

- Surface tensions or trade-offs within responses

- Compare early interviews with later ones

This is where AI allows researchers to move beyond surface-level clustering. Iterative prompting lets you build depth in stages rather than accepting the first summary as complete.

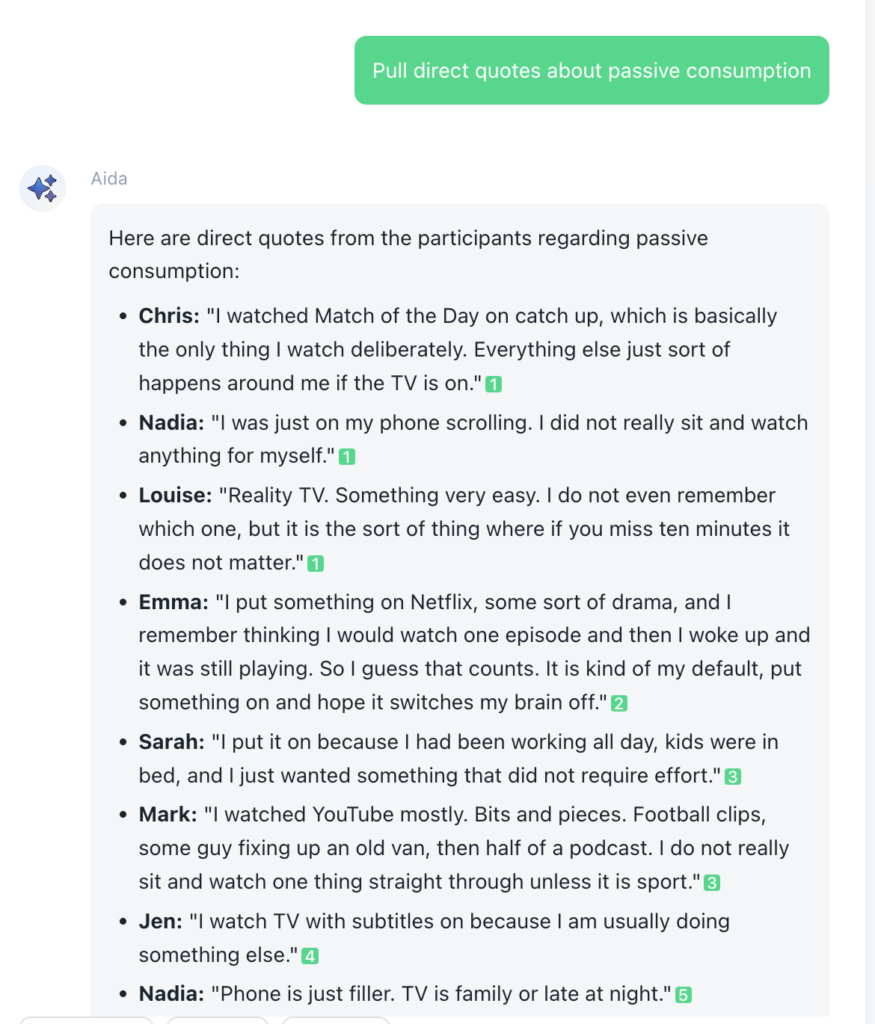

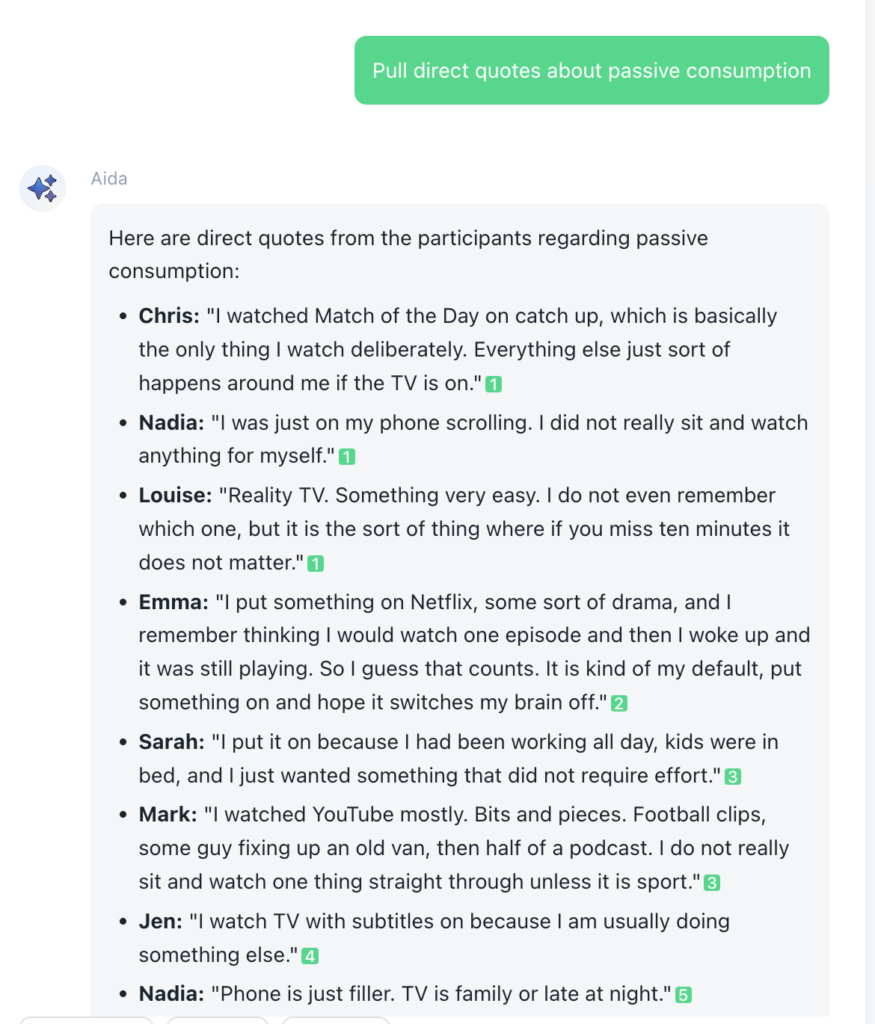

4. Trace every theme back to source material

If AI-generated themes cannot be traced back to source material, they should not be treated as findings.

Beings links themes and summaries directly to the transcript segments or responses they are derived from. That connection should be used deliberately, not treated as a background feature.

- Pull direct quotes.

- Re-read the surrounding context.

- Check whether tone, hesitation or emphasis shifts the meaning of a theme.

This is where the ground truth principle becomes operational. AI can cluster and summarise, but the transcript remains the authority. The participant’s words are the evidence.

Best practice for using AI in research means refusing to treat summaries as endpoints. Every theme should be anchored to what was actually said, so that nuance is preserved and findings remain defensible.
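For teams that export themes and transcripts for their own checks, the traceability rule above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of how Beings or Aida works internally: the `Theme` record, the `normalise` and `untraceable_quotes` helpers, and the sample data are all hypothetical. It only tests whether each supporting quote appears verbatim in at least one transcript, so it complements re-reading the surrounding context rather than replacing it.

```python
from dataclasses import dataclass, field
import re


@dataclass
class Theme:
    """Hypothetical theme record: a label plus the quotes offered as evidence."""
    label: str
    quotes: list = field(default_factory=list)


def normalise(text):
    """Lowercase, straighten curly quotes and collapse whitespace so that
    trivial formatting differences do not hide a verbatim match."""
    text = text.replace("\u2018", "'").replace("\u2019", "'")
    text = text.replace("\u201c", '"').replace("\u201d", '"')
    return re.sub(r"\s+", " ", text).strip().lower()


def untraceable_quotes(themes, transcripts):
    """Return {theme label: [quotes not found in any transcript]}.

    A theme with no quotes at all is also flagged, with an empty list,
    because it has no evidence to trace.
    """
    haystacks = [normalise(body) for body in transcripts.values()]
    flagged = {}
    for theme in themes:
        not_found = [
            quote for quote in theme.quotes
            if not any(normalise(quote) in haystack for haystack in haystacks)
        ]
        if not theme.quotes or not_found:
            flagged[theme.label] = not_found
    return flagged


# Illustrative data only; not drawn from any real study.
transcripts = {
    "interview_01": "I waited three weeks for a call back.  Nobody told me why.",
    "interview_02": "The nurse was brilliant, but the booking system is a mess.",
}
themes = [
    Theme("Communication gaps", ["Nobody told me why."]),
    Theme("Staff praised", ["The nurses were all brilliant"]),
    Theme("Cost concerns"),
]

report = untraceable_quotes(themes, transcripts)
for label, quotes in report.items():
    print(label, "->", quotes if quotes else "no supporting quotes")
```

In this sketch, "Staff praised" is flagged because its quote paraphrases rather than reproduces what the participant said, and "Cost concerns" is flagged because it carries no evidence at all. Either result is a prompt to return to the transcript before treating the theme as a finding.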

5. Complete the sandwich model before reporting

This is the second layer of the sandwich model, where human judgement returns before findings are shared.

Before insights leave your team, pause.

- Revisit your objectives.

- Review key transcripts.

- Sense check whether the framing of insights reflects the full dataset.

- Rewrite conclusions in language that reflects professional judgement rather than model phrasing.

The final output should carry the authority of the research team, not the tone of an algorithm.

When used this way, Beings and Aida accelerate analysis while accountability remains firmly with the researchers.

Using AI in research demands a best practice approach

AI in research can reduce manual workload, surface patterns at speed and help teams move through qualitative data more efficiently. What it cannot do is replace professional judgement.

Best practice for using AI in research means treating it as part of a structured workflow. Data handling is understood from the outset. Prompts are framed carefully. Outputs are tested against ground truth. Human judgement sits before and after the analysis.

If you want to apply these principles in practice, Aida by Beings is built specifically for qualitative teams who need traceability, transparency and structured workflows embedded from the start. Every theme links back to source material. Context can be set before analysis begins. Outputs remain anchored to evidence.

You can explore Aida and try it for free to see how AI can support your research process without displacing accountability.