The best AI research assistant for research teams and agencies is the one that strengthens rigour rather than diluting it. An AI research assistant can now deliver speed and context very quickly, but research teams also need a system that respects participant data, makes it easy to trace findings back to source material, and supports critical thinking instead of smoothing over it.

An AI research assistant should help researchers think more clearly, not simply agree with whatever framing it is given.

Before choosing a platform, it helps to be clear about what an AI research assistant should actually do for a research team.

What is an AI research assistant?

An AI research assistant is a tool or chatbot designed to support qualitative research analysis by helping researchers review, organise and interpret large volumes of research more quickly.

In practical terms, that usually means uploading interview transcripts, focus group recordings or open-text survey responses and asking the system to surface themes, summarise patterns or cluster responses. A good AI research assistant should also allow researchers to explore specific questions, compare segments and refine lines of enquiry without starting from scratch each time.

For research agencies, this can reduce turnaround time across multiple client projects. For in-house teams, whether in healthcare, education, technology or policy, it can mean faster access to insight without increasing headcount.

A true AI research assistant should do more than generate summaries. It should work within a structured project environment, allow researchers to add context about objectives and methodology, and make it clear how conclusions are drawn from the underlying data. If those foundations are missing, the output may look polished but lack substance.

What research teams actually need from an AI research assistant

Choosing the best AI research assistant starts with setting clear criteria. Here is what research teams should look for in an AI research assistant:

1. Clear data handling

Research data is rarely neutral material. Client interviews, patient feedback and stakeholder discussions often contain personal context and commercially sensitive insight. These are not documents that can be casually pasted into a public AI tool.

Before using AI for qualitative research analysis, research teams need clear answers to a few basic questions:

- Where is project data processed and stored?

- Is any of it used to train the model?

- Who has access to it?

- Can projects be separated so client data never mixes?

This is where the difference between general AI tools and research environments becomes apparent.

ChatGPT is a public, cloud-based system designed for general tasks. Text entered into it is transmitted to remote infrastructure where models generate responses. Business plans may prevent inputs from being used for training, but the data is still processed within third-party cloud infrastructure and outside the researcher’s direct control.

Beings takes a different approach. It operates as a private research environment where AI works inside defined project spaces. Research material stays within the project corpus provided by the researcher and is not used to train shared models.

If an AI research assistant cannot clearly explain how data is handled, that is a risk. Researchers should understand exactly what happens to participant information once it is uploaded.

2. Protection against AI user bias

Large language models are trained to be helpful to the user, which can mean they mirror the framing or assumptions within a prompt rather than challenging them. In research, this tendency creates AI user bias: the output reflects the researcher's starting assumptions more than the data.

If a researcher asks, “Why were participants frustrated with the service?” a generic AI system may focus only on frustration, even if the transcripts contain positive sentiment. The result feels coherent, but it may quietly reinforce the researcher’s initial framing.

A strong AI research assistant should help surface what is actually present in the data, not simply reflect what the user expects to find.
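One simple way to test a framing before asking a leading question is to look at how the data has already been coded. The sketch below is illustrative only: the `CodedSegment` type, its sentiment labels and the sample excerpts are all hypothetical, and it does not describe how Beings or any other tool works internally.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CodedSegment:
    """A transcript excerpt with a researcher-assigned sentiment code (hypothetical schema)."""
    participant: str
    text: str
    sentiment: str  # e.g. "positive", "negative", "neutral"


def sentiment_shares(segments):
    """Return each sentiment code's share of all coded segments."""
    counts = Counter(s.sentiment for s in segments)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: n / total for label, n in counts.items()}


# Made-up sample data for illustration only.
segments = [
    CodedSegment("P1", "The booking page kept timing out.", "negative"),
    CodedSegment("P2", "Support answered within minutes.", "positive"),
    CodedSegment("P3", "I liked the reminder emails.", "positive"),
    CodedSegment("P4", "It was fine, nothing special.", "neutral"),
]

shares = sentiment_shares(segments)
```

If negative codes make up only a small share of the segments, a question that asks only about frustration describes a minority of the data and is worth pairing with a question about what worked.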

3. Source-linked outputs

Insight without evidence is opinion. Research teams need to be able to trace themes back to direct quotes, check how a conclusion was formed, and validate whether the summary accurately reflects participant language. If a tool produces clean paragraphs without showing the underlying source material, it becomes difficult to defend findings internally or with clients.
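As a minimal sketch of what source linking can mean in practice, each theme can carry the quotes that support it, and each quote can be checked verbatim against the transcript it cites. The names used here (`Quote`, `Theme`, `unverified_quotes`) and the sample data are assumptions made for illustration, not a description of any product's internals.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Quote:
    transcript_id: str
    text: str


@dataclass
class Theme:
    name: str
    quotes: list = field(default_factory=list)


def _normalise(text):
    """Collapse whitespace and case so line breaks do not cause false mismatches."""
    return " ".join(text.split()).lower()


def unverified_quotes(theme, transcripts):
    """Return quotes that cannot be found verbatim in their cited transcript."""
    missing = []
    for quote in theme.quotes:
        source = transcripts.get(quote.transcript_id, "")
        if _normalise(quote.text) not in _normalise(source):
            missing.append(quote)
    return missing


# Made-up transcripts and theme for illustration only.
transcripts = {
    "T1": "Honestly the onboarding was quick.\nI was set up in a day.",
    "T2": "I never found the export button.",
}
theme = Theme(
    name="Ease of setup",
    quotes=[
        Quote("T1", "I was set up in a day"),
        Quote("T2", "Setup was effortless"),  # not actually said in T2
    ],
)
problems = unverified_quotes(theme, transcripts)
```

Any quote returned in `problems` is a paraphrase or an invention rather than participant language, and should be traced or removed before the theme is reported.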

4. Designed for qualitative research

Many teams are experimenting with general-purpose AI tools. They can summarise text, but they are not structured around qualitative workflows.

A purpose-built AI research assistant should support:

- Project-based organisation

- Uploading research context and objectives

- Transcript-level interrogation

- Iterative questioning

- Transparent theme development

Without that structure, researchers are left stitching together prompts and hoping the output remains consistent across projects. The best AI research assistant is the one that meets these standards consistently.

What are the best AI research assistants in 2026?

In 2026, most research teams are choosing between general-purpose AI tools and platforms built specifically for qualitative analysis. The right choice depends on how much structure, data control and auditability your work requires.

Here is how the most commonly used options compare.

ChatGPT and other LLMs

ChatGPT is one of the most widely used AI tools globally, and many research teams use it to summarise transcripts, explore early themes or draft reports. Other generalist large language models, such as Claude, are also used in similar ways.

Pros

- Fast summarisation

- Flexible prompting

- Useful for brainstorming and early-stage synthesis

- Familiar interface

Cons

- Not designed specifically for qualitative research workflows

- No built-in project structure for client segmentation

- Does not automatically link themes back to transcript sources

- Can mirror the framing of the prompt

- Data governance is left to the researcher rather than built into the tool

Because it is so widespread, ChatGPT may seem like the obvious choice for an AI research assistant in 2026, but it may not be the most sensible option. For more information as to why, take a look at our Beings AI vs ChatGPT comparison. While general LLMs can act as capable drafting assistants, the responsibility for structure, data governance and traceability sits entirely with the researcher.

Beings

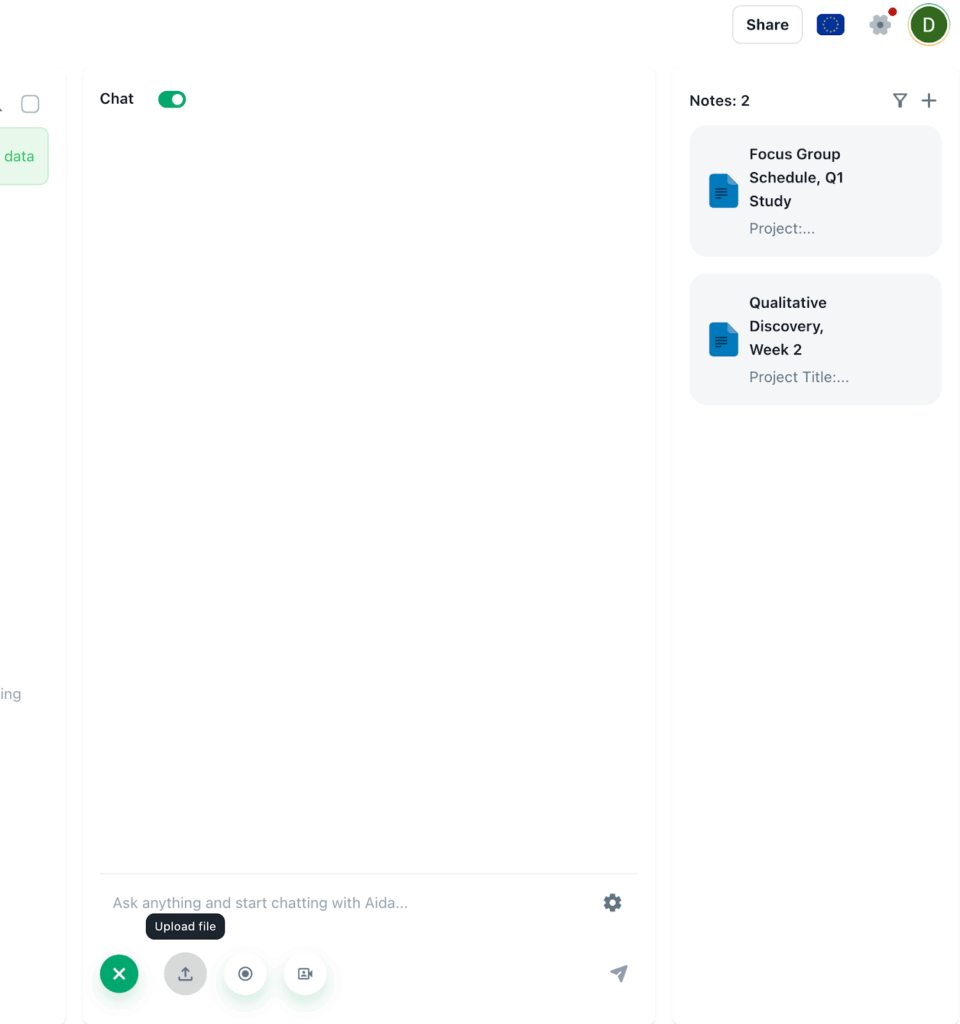

Beings is built specifically as an AI research assistant for qualitative research teams and agencies. Rather than layering AI onto a traditional coding system or relying on a general-purpose chatbot, it is structured around research projects from the outset.

Researchers create a project, upload transcripts and add research context such as objectives, audience and methodology. That context remains attached to the work, which reduces repetition and keeps analysis grounded.

Aida, the AI within Beings, surfaces themes directly from the transcripts and links findings back to source material. You can move from a summary to the exact participant quotes behind it, making it easier to validate interpretations and defend conclusions with clients or internal stakeholders.

The platform is designed for interrogation as well as synthesis. Researchers can probe themes, challenge early conclusions and refine questions within the same project environment, rather than copying text between tools.

Pros

- Built specifically for qualitative research

- Project-based structure with attached context

- Source-linked findings for traceability

- Designed for iterative theme interrogation

- Clear data boundaries

Cons

- Focused on qualitative research rather than general content creation

- Not intended as a broad productivity assistant

For teams handling sensitive participant data and needing defensible insight, Beings positions AI as a structured research assistant rather than a general drafting tool.

Choosing the right AI research assistant in 2026

The right AI research assistant depends on what your team is accountable for.

General LLMs such as ChatGPT can support drafting and exploratory thinking. They are accessible and flexible, but that does not automatically make them appropriate environments for live qualitative research data.

Participant interviews often contain personal experiences, health information, commercially sensitive insight and identifiable language. Uploading that material into a general-purpose AI system without strict governance review introduces risk. Ultimately, convenience should not outweigh data responsibility.

If you need a purpose-built AI research assistant that combines structured projects, source-linked outputs and clear data boundaries, Beings is designed for that role.

To see how a purpose-built AI research assistant works in practice, without having to learn an entire desktop software package, you can try Beings for free and explore your own transcripts inside a structured, secure project environment.