AI in risk management handles tasks such as pattern detection in large data sets, risk report drafting, and reconciliation of data across systems. What it can’t do is take on the judgment, accountability, or regulatory defensibility that risk work actually requires. For finance teams exploring where AI fits, the balance is between using AI to speed up the manual analysis that risk management depends on and keeping human-in-the-loop judgment calls and checks in place.

What type of AI is being used in risk management?

Risk management isn’t one workflow. It’s a dozen different ones, stitched together across operational risk, credit risk, model validation, financial crime, and regulatory compliance. AI shows up differently in each, but a handful of applications are common across most finance teams experimenting with it:

AI for basic financial analysis and pattern detection

At the simplest level, AI tools are being used to crunch large data sets and surface patterns a human analyst working in risk management would take days to work through. Trend detection across transaction histories. Correlation analysis across portfolios. Flagging outliers in trading activity. These are jobs AI does faster than any analyst, with outputs that can be checked against underlying data.

AI for drafting investment briefs and risk reports

The other common use of AI in risk management workflows is drafting documents. A risk team produces a high volume of documents across the cycle, from credit memos and investment committee briefs to quarterly risk reports. AI can produce the first draft of most of these faster than a junior analyst, taking the structured inputs your team already works with and turning them into a usable narrative.

The drafts always need editing. For an investment committee brief, the structure is usually sound but the framing sometimes needs tightening, and the numbers need checking every time before they go anywhere near a committee. For a quarterly risk report, the core mechanics, pulling the right inputs into the right structure, are stable enough that the draft is usable as a starting point, even if the narrative requires a human hand before sign-off.

AI for data reconciliation and spreadsheet analysis

Anyone who’s worked in risk knows how much time disappears into spreadsheets. Reconciling data between two systems that don’t quite agree. Writing Excel formulas that nobody else will understand in six months. Chasing down why one number in a report doesn’t match the same number in a different report.

AI tools can take on a lot of this. They’ll write a formula, explain what an existing formula does, or produce a working Python script for a repetitive task. For analysts who didn’t come up through a coding background, this lowers the barrier to automating work that used to be manual.

The limitation is that AI-generated code and formulas still need checking. A formula that looks right and returns a plausible number isn’t the same as a formula that’s actually right. For regulated reporting, that distinction matters.
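
As an illustration of the kind of script described above, here is a minimal sketch of a two-system reconciliation. The record IDs, amounts, tolerance, and the `reconcile` function are all hypothetical, and a real extract would need its own matching keys and rounding rules. Like any generated code, it would need checking against known breaks before anyone relies on it.

```python
from decimal import Decimal


def reconcile(system_a, system_b, tolerance=Decimal("0.01")):
    """Compare two {record_id: amount} extracts and list every disagreement."""
    breaks = []
    # Walk the union of IDs so records missing from either side are caught.
    for record_id in sorted(set(system_a) | set(system_b)):
        a = system_a.get(record_id)
        b = system_b.get(record_id)
        if a is None:
            breaks.append((record_id, "missing in system A", None, b))
        elif b is None:
            breaks.append((record_id, "missing in system B", a, None))
        elif abs(a - b) > tolerance:
            breaks.append((record_id, "amount mismatch", a, b))
    return breaks


# Hypothetical extracts; Decimal avoids floating-point rounding on money.
ledger = {"T001": Decimal("100.00"), "T002": Decimal("250.50"), "T003": Decimal("75.25")}
report = {"T001": Decimal("100.00"), "T002": Decimal("250.05"), "T004": Decimal("10.00")}

for record_id, issue, a, b in reconcile(ledger, report):
    print(record_id, issue, a, b)
```

Every break names the record and both values, so a reviewer can trace it back to the source systems rather than taking the result on trust.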

Document review across policies, filings, and audit evidence

A lot of risk work involves reading through documents to find specific things. Policies, regulatory filings, third-party contracts. AI can do the first pass, surfacing the clauses or anomalies a human would otherwise hunt for manually.

Anomaly flagging in large data sets

Anomaly detection is one of the oldest uses of machine learning in finance, and still one of the most effective. A model trained on normal transaction patterns flags the ones that deviate. The same logic catches the quarterly report where the numbers don’t quite fit the pattern. For teams monitoring fraud, financial crime, or market abuse, AI catches things a human reviewer would miss because there’s too much data to work through.

These models are the foundation for most fraud detection, financial crime monitoring, and market abuse systems in risk management practices today.
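
To make the "learn what normal looks like, then flag deviations" idea concrete, here is a deliberately simple sketch. It swaps a trained machine learning model for a robust statistical baseline (median and median absolute deviation, scored with the modified z-score), so it is not how production fraud systems work. The function names, sample amounts, and the 3.5 threshold are illustrative assumptions.

```python
from statistics import median


def fit_baseline(normal_amounts):
    """Learn a robust centre and spread from history assumed to be normal."""
    centre = median(normal_amounts)
    mad = median(abs(x - centre) for x in normal_amounts)
    return centre, mad


def flag_anomalies(amounts, baseline, threshold=3.5):
    """Return the amounts whose modified z-score exceeds the threshold."""
    centre, mad = baseline
    if mad == 0:
        raise ValueError("baseline has no spread; cannot score deviations")
    flagged = []
    for x in amounts:
        # 0.6745 rescales the MAD so the score is comparable to a z-score.
        score = 0.6745 * abs(x - centre) / mad
        if score > threshold:
            flagged.append(x)
    return flagged


# Hypothetical data: a run of ordinary amounts, then a new batch to screen.
history = [102, 98, 101, 97, 103, 99, 100, 104, 96, 100]
new_batch = [101, 95, 180, 99]

baseline = fit_baseline(history)
print(flag_anomalies(new_batch, baseline))
```

The flagged items are candidates for human review, not conclusions: the threshold decides how many land on a reviewer's desk, and that is a judgment call in its own right.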

Where AI is actually helping risk teams today

Most finance teams experimenting with AI in risk don’t report dramatic transformations. They report modest, specific gains in three main places:

Faster first passes on long documents

The most consistent win reported by risk management analysts is time saved on the first read. Policy documents, regulatory updates, and counterparty financial filings (the kind of material that used to need an analyst blocked out for an hour or two) can now be summarised, flagged, and made ready for review in minutes. The analyst still reviews the output, but they’re reviewing a structured first pass rather than reading cold.

Cleaning up reporting and audit workflows

AI is quietly useful on the grind work that sits around reporting and audit cycles. Drafting the standard sections of a recurring report. Cross-checking numbers between source systems and the final document. Producing the routine explanations that used to eat junior analyst time. Teams using AI well tend to free up their best people from the work that doesn’t need them.

Surfacing anomalies for human review

The third area is volume triage. Finance teams sit on more data than any human can review. AI narrows the stack: here are the 40 transactions that look unusual, here are the 12 audit findings that warrant a closer look. The value comes from the narrowing. With the help of AI tools, a human reviewer in risk spends their time on the 40, not the 40,000.
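
The narrowing step can be sketched in a few lines, assuming each item already carries a risk score from some upstream model. The `triage` function, the transaction IDs, and the scores below are hypothetical.

```python
import heapq


def triage(scored_items, review_capacity):
    """Return the highest-scoring items, up to what reviewers can handle."""
    return heapq.nlargest(review_capacity, scored_items, key=lambda item: item[1])


# Hypothetical (transaction_id, risk_score) pairs from an upstream model.
scored = [("TX-1", 0.12), ("TX-2", 0.91), ("TX-3", 0.47), ("TX-4", 0.88), ("TX-5", 0.05)]

queue = triage(scored, review_capacity=2)
print(queue)
```

Capping the queue at review capacity is a design choice: it keeps the human workload fixed, but it also means anything below the cut goes unread, so the scoring itself needs periodic checking.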

What are the downsides of using AI in risk management?

The case against AI in risk isn’t that it doesn’t work. It’s that when it fails, it fails in ways that matter. For finance teams operating under regulatory scrutiny, those failure modes need to be understood before deployment, not after. Here are some of the pitfalls when using AI in risk management:

Hallucinations and fabricated outputs

AI models generate plausible-sounding output even when the underlying data doesn’t support it. In risk management, that can mean a summary that cites a clause from a regulation that doesn’t exist, a risk report with a statistic the model invented, or a reconciliation that overlooks the real discrepancy and flags a fabricated one instead. For low-stakes drafting, these errors are an acceptable cost of speed. For anything that ends up in a regulatory filing or an audit response, they’re not.

Governance and regulatory requirements

Risk management operates under regulatory frameworks that don’t bend to accommodate new tools. The FCA, the PRA, and their equivalents globally expect firms to demonstrate that decisions are defensible, that controls are effective, and that models in use are validated. AI tools drop straight into this environment, which means they need the same documentation, testing, and oversight as any other model. Most vendors’ compliance pitches don’t survive first contact with an actual model validation team.

Data security and sensitive information handling

Risk data is some of the most commercially and personally sensitive information a firm holds. Transaction records, client details, counterparty exposures, internal assessments. Sending that data to a general-purpose AI tool, particularly one that processes data outside the UK or EU, or retains prompts for model training, creates a data protection exposure that can outweigh any time saved. Finance teams working under UK GDPR need to know where their data goes, how long it stays there, and who else can access it.

Misalignment across individuals, teams, and companies

The less widely recognised downside of using AI in risk management is organisational. AI adoption in most finance teams to date has been informal: individual analysts trying tools on their own workflows, teams adopting platforms without telling the central function, and leadership announcing AI strategies without consulting the people who’d use them. The result is a patchwork: inconsistent use of different tools, data flowing to places nobody has signed off on, and no shared playbook for what good AI use actually looks like. Reddit threads from risk and internal audit teams repeatedly flag this as a bigger problem than any single tool’s accuracy.

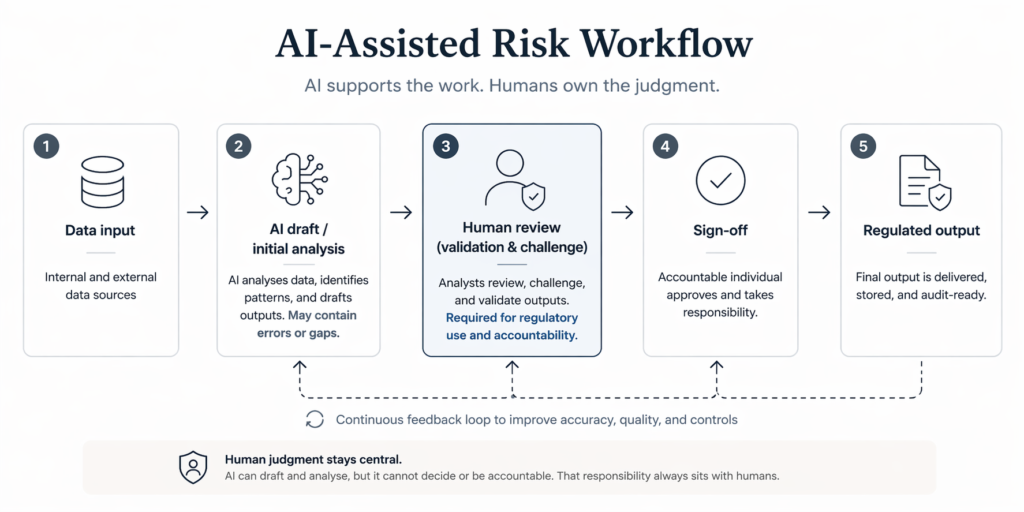

Why human judgment stays central

Put the downsides together and the conclusion follows. AI in risk management is a useful tool, but the judgment, accountability, and defensibility that risk work actually requires have to stay with humans. The structures around risk work don’t allow the alternative, regardless of how capable AI becomes.

Regulators expect humans in the loop

Regulated firms can’t point at an AI output and call it a decision. The FCA, the PRA, and their equivalents require demonstrable human oversight of decisions with material impact. A model can inform the call, but it can’t be the call. Any firm trying to streamline the review step out of its workflows is heading for a difficult conversation with a supervisor.

The cost of a single “AI fat finger”

Risk exists because things go wrong at scale when nobody’s watching. A single AI-driven error that feeds into a filing, a capital calculation, or a client communication can cost a team years of hard-won trust with a regulator or counterparty. The more mechanical work AI takes on, the more important it becomes that a human reviews the outputs that leave the team.

AI as a staff analyst, not a decision-maker

The most useful framing for AI in risk work is to treat it like a capable but unaccountable staff analyst. It can produce drafts, run analyses, and surface patterns faster than anyone else on the team. But every output still needs a human review and a human signature before it goes anywhere that matters. That’s how risk work operates, regardless of how good the tools get.

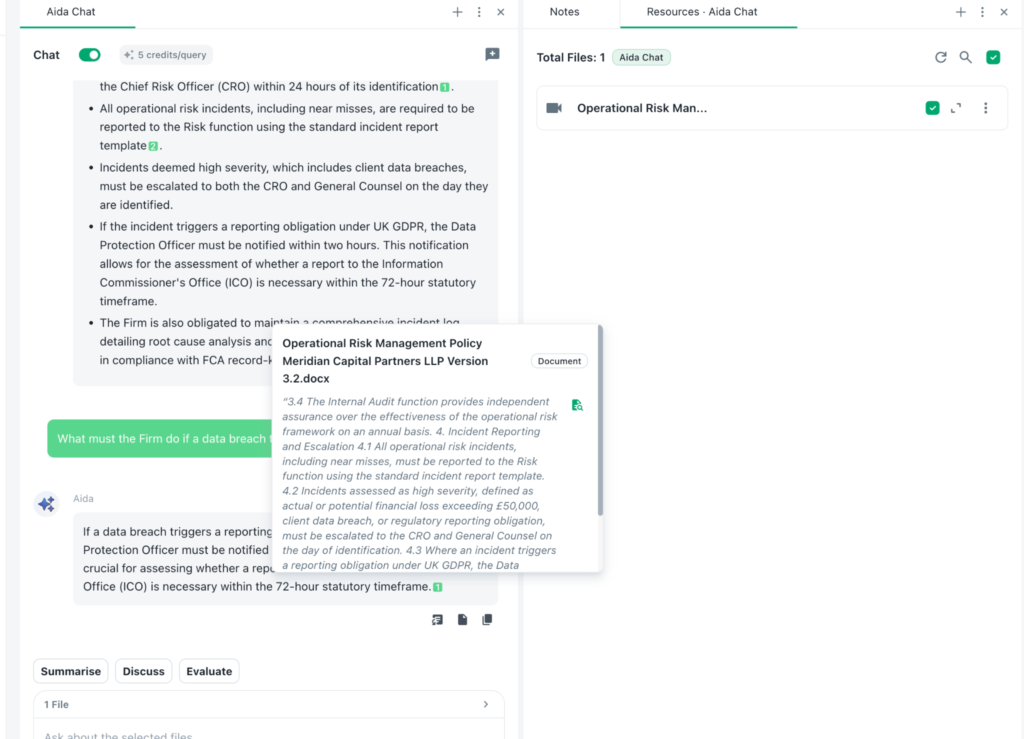

Using Beings for risk and compliance work

For finance teams looking for an AI tool that takes the regulatory, security, and accuracy bar seriously, Beings is built with those concerns at its core. Aida, Beings’ AI co-partner for analysis, operates on a different principle from a general LLM. Every output traces back to source material, cited and directly accountable, and nothing gets invented in the gaps.

Under the Ground Truth principle that Beings is built on, Aida only works with what’s actually in your documents, which means outputs that stand up to the kind of scrutiny regulators and internal audit teams apply. That matters for risk work specifically. A summary that can be audited back to the exact policy or filing is usable evidence. A reconciliation that points to the specific discrepancy behind each flag can be checked and signed off.

Beings also fits the operational realities of UK finance teams. Data is processed in the UK, which matters for teams working under UK GDPR or handling regulated material. Pricing is flat-rate across a team, so cost doesn’t gate access when the tool needs to be rolled out widely. Aida works alongside your team as a co-partner, not a replacement for the judgment, accountability, and sign-off that risk work requires.

If your team is handling sensitive, regulated, or evidence-heavy work, Beings is built for that job. Try Beings for free and see how Aida works alongside your risk and compliance team.